Imagine if local governments began looking at the histogram of net worth of their population every day, calculated in an opt-in/privacy-protecting way.

Not just the median, the whole distribution.

Not just the income, the savings minus debt.

Then acted every day to boost that.

https://twitter.com/Dominic2306/status/1442932945300324354

How to execute on this?

1) Fintech apps already have much of this data

2) States like Estonia & Singapore have national ID systems via e-identity & Singpass that can serve as a primary key

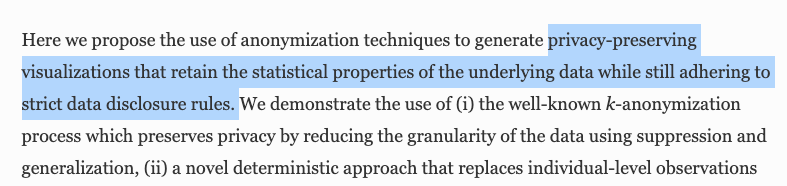

3) Histograms can be calculated in a privacy-preserving way, e.g.: link.springer.com/article/10.114…
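
As a rough illustration of step 3, here is a minimal sketch of one well-known approach: a differentially private histogram using the Laplace mechanism. This is not necessarily the method in the linked article. `private_histogram`, the bin edges, and `epsilon` are hypothetical choices for the example, and a real deployment would want a vetted differential-privacy library rather than Python's `random`.

```python
import random
from bisect import bisect_right


def laplace_noise(scale, rng):
    # The difference of two i.i.d. exponentials with mean `scale`
    # is Laplace-distributed with location 0 and that scale.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_histogram(net_worths, bin_edges, epsilon, rng=None):
    """Noisy bin counts for opt-in net worth values (hypothetical helper).

    bin_edges must be sorted. They define len(bin_edges) + 1 bins:
    (-inf, e0), [e0, e1), ..., [e_last, +inf).
    """
    rng = rng or random.Random()
    counts = [0] * (len(bin_edges) + 1)
    for w in net_worths:
        counts[bisect_right(bin_edges, w)] += 1
    # Assumption: each person lands in exactly one bin, so adding or
    # removing one person changes one count by 1 (sensitivity 1).
    scale = 1.0 / epsilon
    return [c + laplace_noise(scale, rng) for c in counts]


# Example with made-up values: savings minus debt, so negatives are allowed.
sample = [-5_000, 0, 500, 20_000, 250_000, 250_000]
edges = [0, 10_000, 100_000]
noisy = private_histogram(sample, edges, epsilon=0.5)
```

Smaller `epsilon` means more noise and stronger privacy. The noisy counts can come out fractional or even negative, so a dashboard would typically clamp or round them before display.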

1) Fintech apps already have much of this data

2) States like Estonia & Singapore have national ID systems via e-identity & Singpass that can serve as primary key

3) Histograms can be calculated in privacy-preserving way, eg: link.springer.com/article/10.114…

Our current metrics for society are bad because they are easily gamed and aren't granular enough.

Society doesn't necessarily prosper as a whole if the stock market goes up. But it would if the (inflation-adjusted) net worth histogram were right-shifted.
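
One way to make "right-shifted" concrete, as a sketch under stated assumptions: deflate each snapshot by a price index, then check that every decile of the later snapshot is at least as high as the earlier one. The function names, the choice of deciles, and the price-index inputs are all hypothetical, and this is only one possible reading of "right-shifted".

```python
from statistics import quantiles


def real_quantiles(net_worths, price_index, n=10):
    # Convert nominal values to real terms (price_index = 1.0 in the base
    # period), then return the n - 1 cut points of the distribution.
    real = [w / price_index for w in net_worths]
    return quantiles(real, n=n, method="inclusive")


def is_right_shifted(before, after, index_before=1.0, index_after=1.0, n=10):
    """True if no real quantile fell and at least one rose (hypothetical test)."""
    qb = real_quantiles(before, index_before, n)
    qa = real_quantiles(after, index_after, n)
    no_decline = all(a >= b for a, b in zip(qa, qb))
    some_gain = any(a > b for a, b in zip(qa, qb))
    return no_decline and some_gain


# Made-up numbers: nominal net worth up 5% for everyone, prices up 10%.
before = [0, 50, 100, 1_000, 5_000]
after = [w * 1.05 for w in before]
shifted = is_right_shifted(before, after, index_before=1.0, index_after=1.10)
```

In this made-up example the nominal numbers all went up, but the check fails once inflation is taken into account, which is the point of looking at the inflation-adjusted distribution rather than a single headline figure.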

https://twitter.com/balajis/status/1250598918267650048

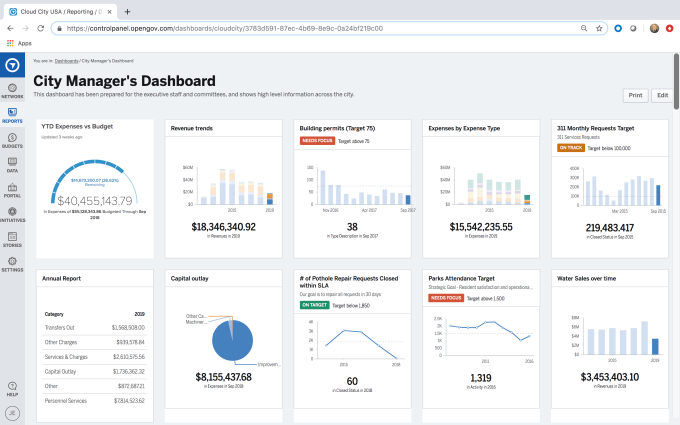

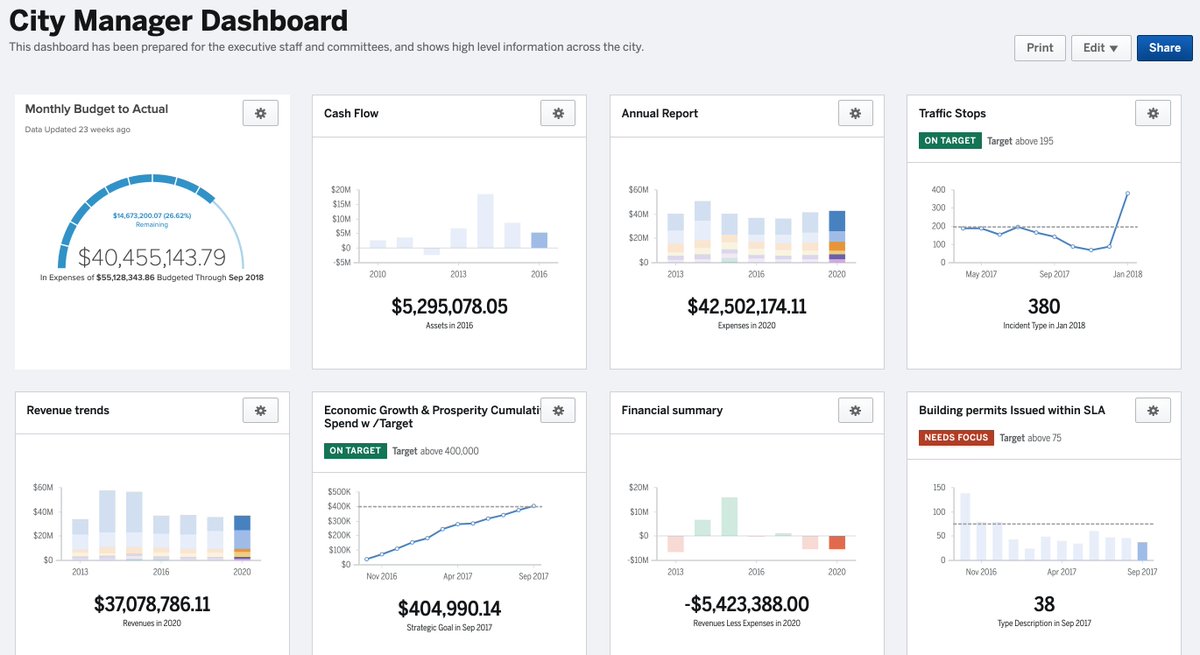

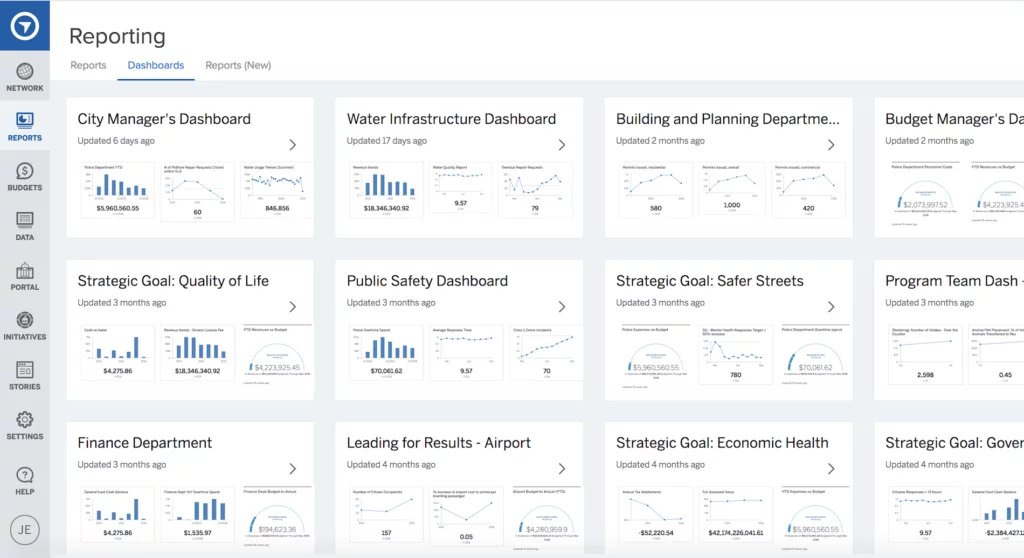

What OpenGov is doing is also relevant here. Perhaps integrate citizen data, on an opt-in, aggregated, and anonymized basis, into the city dashboard via a city app.

cc @FrancisSuarez @ZacBookman

opengov.com

The OpenGov City Manager's Dashboard.

This could be extended to startup cities, and to online communities with large budgets like DAOs.

opengov.com/products/budge…

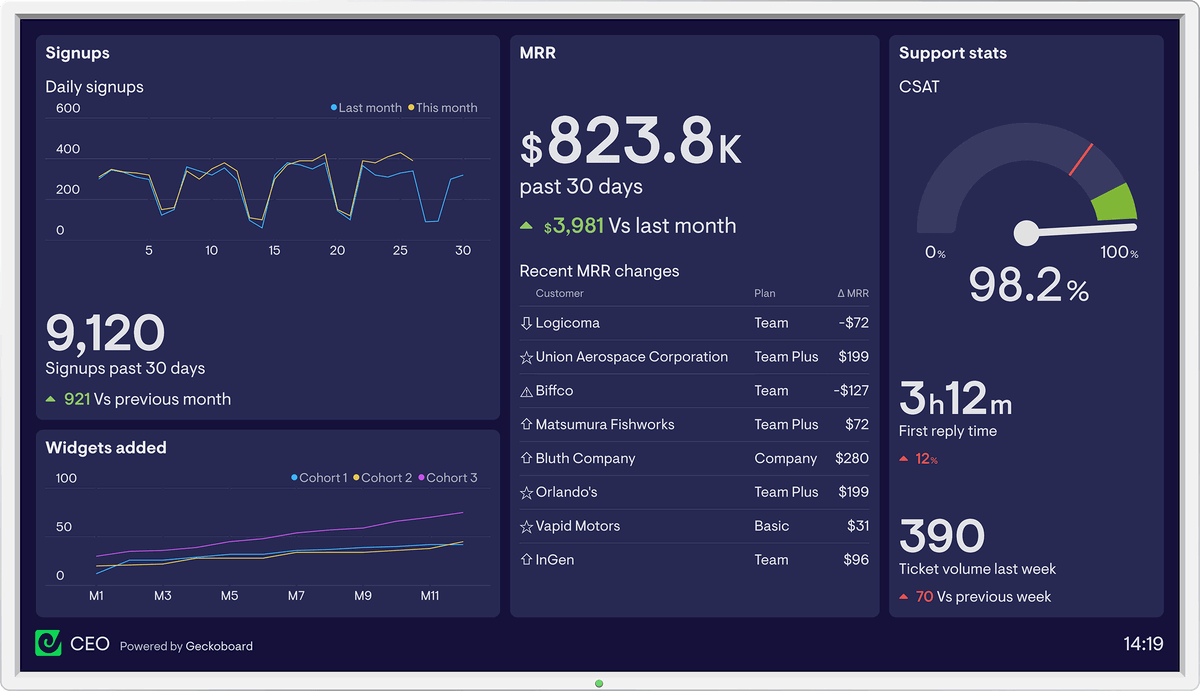

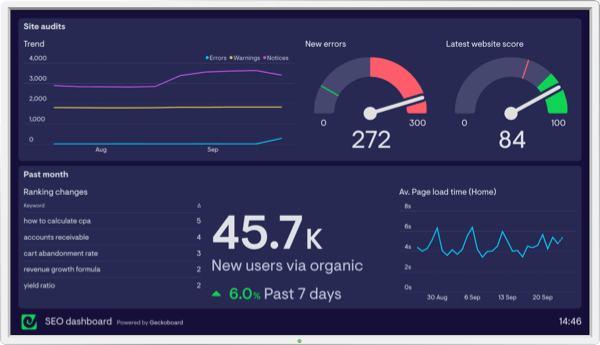

Just as with a startup, you can pull many different kinds of analytics into a city dashboard. opengov.com/products/budge…

Btw, city managers are more like CEOs. Unlike mayors, they are not elected. They run the city on a day-to-day basis. onlinempa.unc.edu/blog/city-mana…
