IO economist + associate prof at @StanfordGSB. I use theory + data to study how risk, commitment and information flows interplay with (good) policy design.

This paper is for anyone who does an exercise like this:

Here's the gist: a common exercise in empirical econ is to analyze the effects of a policy change by taking obs of price/quantity pairs, fitting a demand curve and integrating under it to get a measure of welfare (e.g. consumer surplus; deadweight loss). Here's an example.
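
To make the exercise concrete, here's a minimal sketch of the fit-then-integrate step. Everything in it is an assumption for illustration: the price/quantity obs are made up, the linear functional form is just one convenient choice, and the helper names (`demand`, `consumer_surplus`) are hypothetical. It is not the paper's method.

```python
import numpy as np

# Made-up price/quantity observations (illustration only, not real data).
prices = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
quantities = np.array([9.0, 8.0, 7.0, 6.0, 5.0])

# Assumed functional form: linear demand q(p) = a + b * p, fit by least squares.
b, a = np.polyfit(prices, quantities, 1)


def demand(p):
    """Fitted quantity demanded at price p."""
    return a + b * p


def consumer_surplus(p, n_grid=1001):
    """Integrate fitted demand over prices from p up to the choke price (q = 0)."""
    choke = -a / b  # price at which fitted demand hits zero
    grid = np.linspace(p, choke, n_grid)
    q = demand(grid)
    # Trapezoid rule, written out by hand to avoid depending on a NumPy version.
    return float(np.sum((q[1:] + q[:-1]) * np.diff(grid)) / 2.0)


# Welfare effect of a hypothetical policy that moves the price from 2 to 4.
cs_before = consumer_surplus(2.0)
cs_after = consumer_surplus(4.0)
delta_cs = cs_after - cs_before
print(f"CS before: {cs_before:.2f}, CS after: {cs_after:.2f}, change: {delta_cs:.2f}")
```

Note that the answer leans on the fitted curve at prices well outside the observed range (here, everything between 5 and the choke price), so a different assumed functional form would generally give a different welfare number.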

Tl;dr: Heterogeneity matters when thinking about lockdown/re-opening policies. Diffs in concentrations of places where ppl encounter each other, diffs in industry, demographic (and co-morbidity) distributions, diffs in when the virus hit.

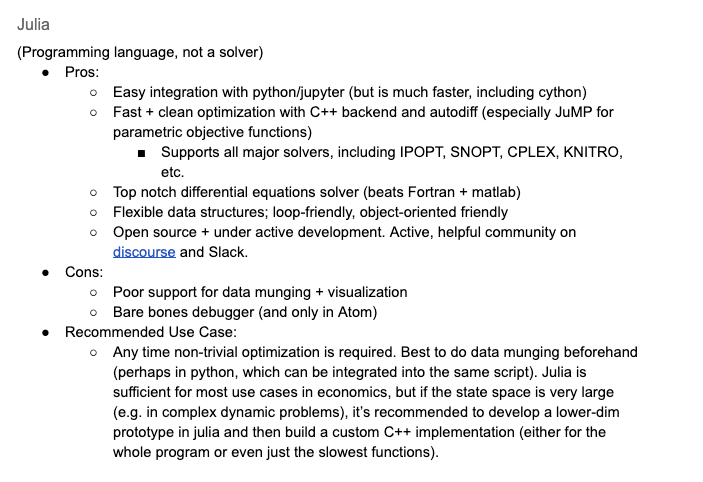

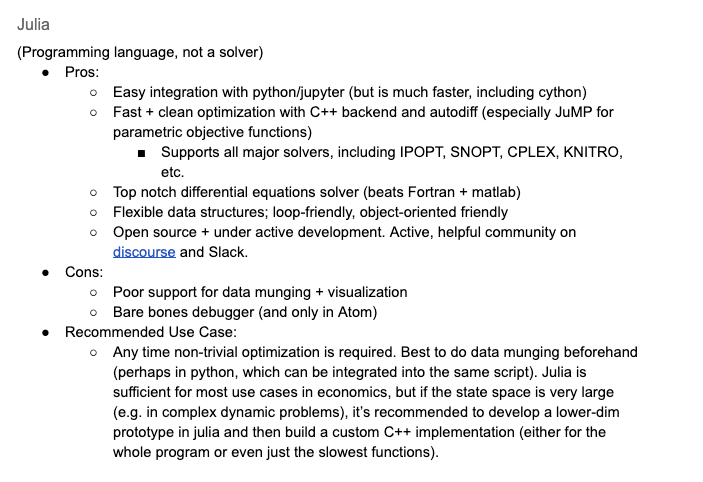

A few other things that it'd be great to have a 1-liner explaining (w/ links to more):

Motivation: