Slovakia (pop 5.5M) is attempting a mass COVID-19 screening campaign using rapid antigen tests. The public health community is going to learn a lot. Here's what I'm looking for...

1/

spectator.sme.sk/c/22519165/cor…

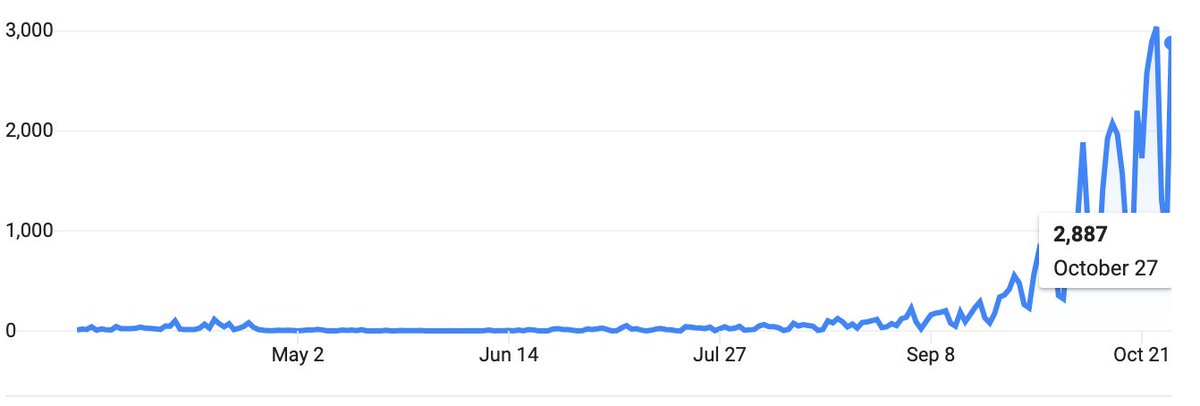

Slovakia, like much of Europe, is experiencing a rapid acceleration of infections & deaths, and is starting to use curfews & lockdowns.

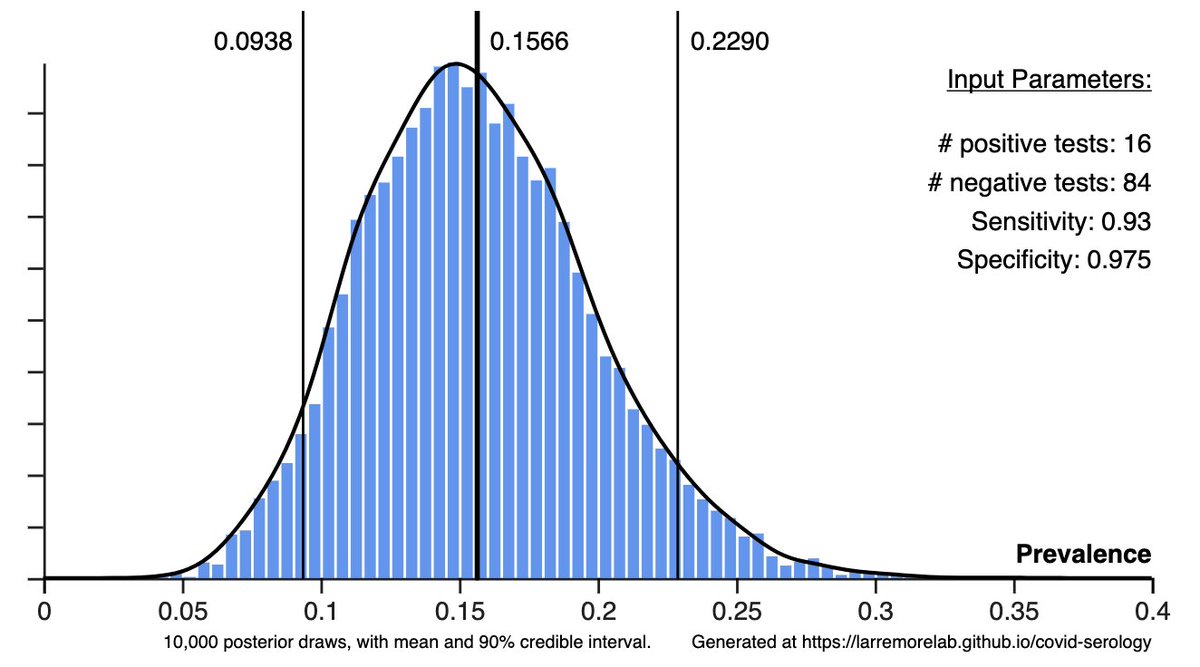

A pilot phase tested 140K people with rapid antigen tests, found 5.5K positives (4%).

They'll test the nation over the next 2 weekends! Good idea?

2/

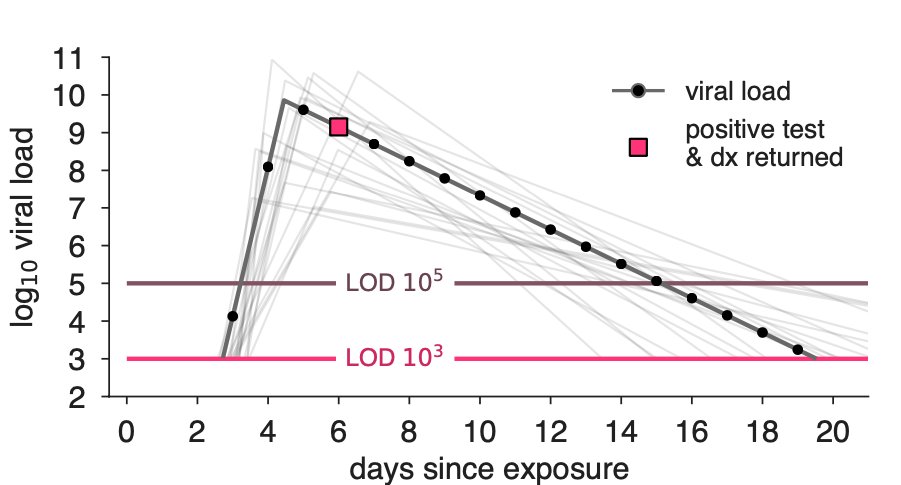

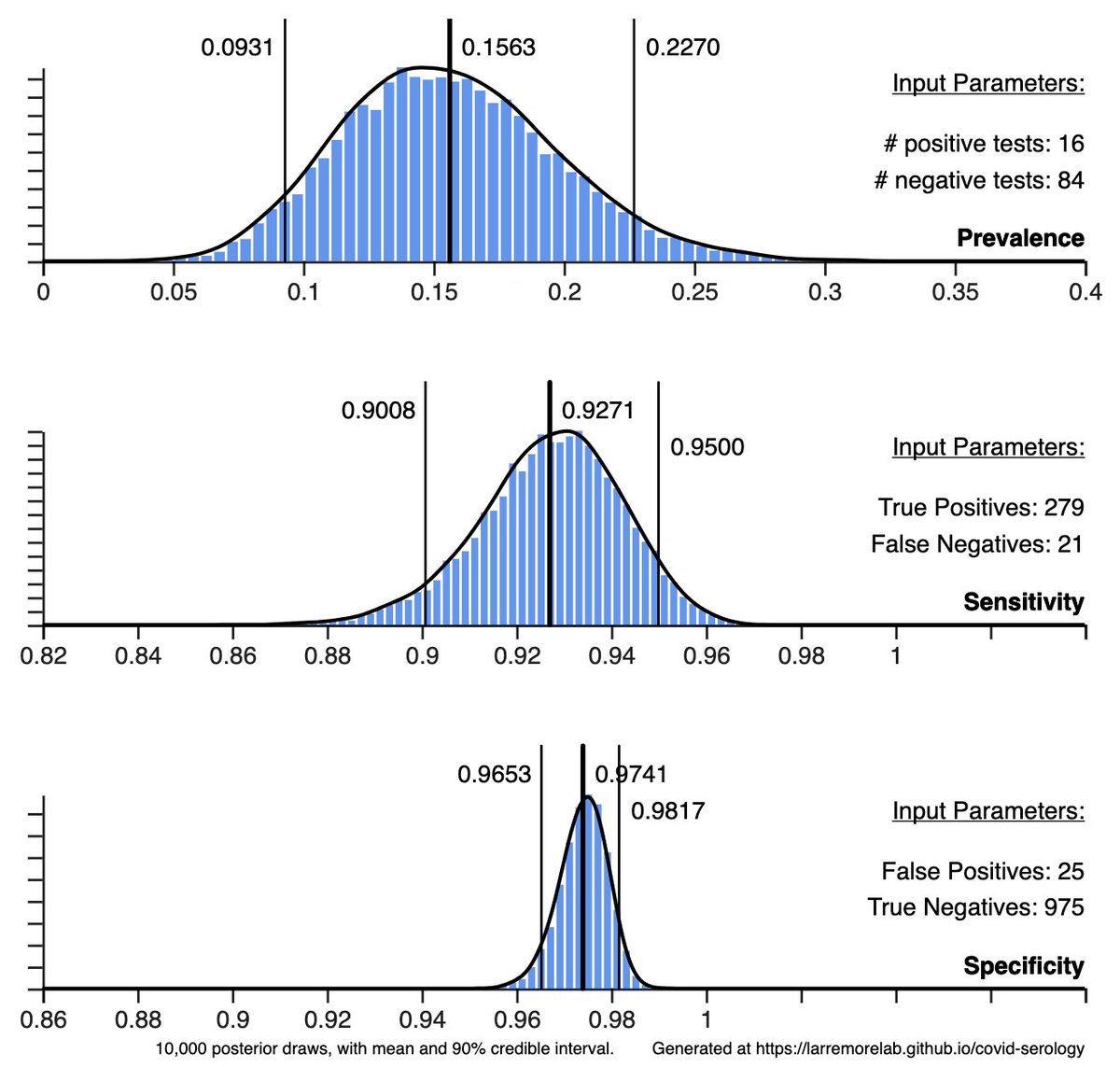

First, there are reasonable critiques of rapid Ag tests related to their sensitivity—do they miss too many infections?—and their specificity—do they falsely tell uninfected people that they're positive?

Re sensitivity: every broken transmission chain is a victory, BUT...

3/

If a COVID+ person gets a false-negative test result, and then changes behavior—drops mask, goes to pub, etc—then the test result may have actually caused new infections!

The big Q: will screening break more transmission chains than it unintentionally creates? (I think so!)

4/
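
A back-of-envelope sketch of that trade-off, not a real transmission model. Every parameter name and value here is a hypothetical placeholder: `averted_per_detection` is the number of onward infections prevented when a detected case isolates, `relax_probability` is the share of false negatives who loosen their behavior, and `extra_per_relaxed` is the number of additional infections each of them causes.

```python
def net_chains_broken(infected_screened, sensitivity, averted_per_detection,
                      relax_probability, extra_per_relaxed):
    """Toy estimate: chains broken by true positives minus chains
    created by false negatives who relax their behavior.

    All inputs are illustrative assumptions, not measured values.
    """
    if not 0.0 <= sensitivity <= 1.0:
        raise ValueError("sensitivity must be between 0 and 1")
    if not 0.0 <= relax_probability <= 1.0:
        raise ValueError("relax_probability must be between 0 and 1")

    detected = infected_screened * sensitivity        # true positives
    missed = infected_screened * (1.0 - sensitivity)  # false negatives

    broken = detected * averted_per_detection
    created = missed * relax_probability * extra_per_relaxed
    return broken - created


if __name__ == "__main__":
    # Hypothetical inputs: 1,000 infected people screened, 70% sensitivity,
    # half of the false negatives relax, one infection averted or caused each.
    print(net_chains_broken(1000, 0.7, 1.0, 0.5, 1.0))
```

Under this simplification screening comes out ahead whenever `sensitivity * averted_per_detection` exceeds `(1 - sensitivity) * relax_probability * extra_per_relaxed`, which is why both test sensitivity and how people act on a negative result matter.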

The question above could be addressed through modeling, but it could also be sidestepped via better communication: a negative rapid screening test should not be a passport to 2019. Screening is a way to filter out many (not all) asymptomatic or presymptomatic infections.

5/

In any case, if the Slovakian mass screening works, we should see a huge spike in detected cases from the screen, followed by a decline or slowing in cases thereafter due to cases averted.

@NoahHaber & ilk will have some ideas on how to do the causal epi properly. (Please, Noah?)

6/

What about false positives? If prevalence is low and specificity is imperfect, it's possible for a large fraction (even a majority) of positives to be false positives.

Unnecessary isolation days from screening? Sounds bad, but not against a counterfactual lockdown.

7/
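
To make the false-positive point concrete, here is a minimal sketch of the standard Bayes calculation. The sensitivity and specificity values below are illustrative assumptions, not measured performance of any particular rapid antigen test.

```python
def false_positive_fraction(prevalence, sensitivity, specificity):
    """Share of positive results that are false positives (1 - PPV)."""
    for name, value in (("prevalence", prevalence),
                        ("sensitivity", sensitivity),
                        ("specificity", specificity)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")

    true_pos = prevalence * sensitivity                    # infected, test positive
    false_pos = (1.0 - prevalence) * (1.0 - specificity)   # uninfected, test positive
    total_pos = true_pos + false_pos
    if total_pos == 0:
        raise ValueError("no positive results expected with these inputs")
    return false_pos / total_pos


if __name__ == "__main__":
    # Assumed test characteristics (hypothetical): 80% sensitive, 98% specific.
    for prevalence in (0.01, 0.04):
        share = false_positive_fraction(prevalence, 0.80, 0.98)
        print(f"prevalence {prevalence:.0%}: {share:.0%} of positives are false")
```

With these assumed values, roughly 7 in 10 positives are false at 1% prevalence, dropping to under 4 in 10 at 4% prevalence. Same test, very different result, because prevalence drives the answer.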

Suppose false-positive screening tests cause 1 in every 2 isolation days to be unnecessary. By comparison, a lockdown at 1% prevalence causes 99 in 100 isolation days to be unnecessary.

False positive tests are a problem, to be sure, but the counterfactual matters.

8/
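
The comparison above can be sketched in a few lines. This assumes, as a simplification, that a lockdown is treated as everyone isolating, that everyone who tests positive isolates for the same number of days, and that compliance is perfect. The 99% sensitivity and specificity are hypothetical values picked so that about half of positives are false at 1% prevalence.

```python
def unnecessary_isolation_share(prevalence, sensitivity, specificity):
    """Compare the share of isolation days spent by uninfected people
    under screen-and-isolate versus a blanket lockdown.

    Simplifying assumptions: equal isolation length for everyone,
    perfect compliance, and lockdown modeled as everyone isolating.
    """
    true_pos = prevalence * sensitivity
    false_pos = (1.0 - prevalence) * (1.0 - specificity)
    if true_pos + false_pos == 0:
        raise ValueError("no positive results expected with these inputs")

    screening = false_pos / (true_pos + false_pos)  # only positives isolate
    lockdown = 1.0 - prevalence                     # everyone isolates
    return {"screening": screening, "lockdown": lockdown}


if __name__ == "__main__":
    shares = unnecessary_isolation_share(prevalence=0.01,
                                         sensitivity=0.99,
                                         specificity=0.99)
    print(f"screening: {shares['screening']:.0%} of isolation days unnecessary")
    print(f"lockdown:  {shares['lockdown']:.0%} of isolation days unnecessary")
```

The sketch only compares shares. In absolute terms the gap is larger still, since screening isolates only the people who test positive while a lockdown restricts everyone.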

I'm looking forward to seeing the outcomes of Slovakia's mass screening using rapid antigen tests.

Some have argued rapid Ag tests could be a disaster, while others argue they could be a silver bullet. The proof will be in the epi curves. Let's see what the data say.

9/9
