THREAD: Facebook relies on the public, researchers, & journalists to moderate their platform. But even blatantly violating content does not get removed.

On Sat. we reported weapons for sale in an antiquities trafficking group—it went as expected.

Facebook, this is unacceptable.

https://twitter.com/CounteringCrime/status/1333460374058897408

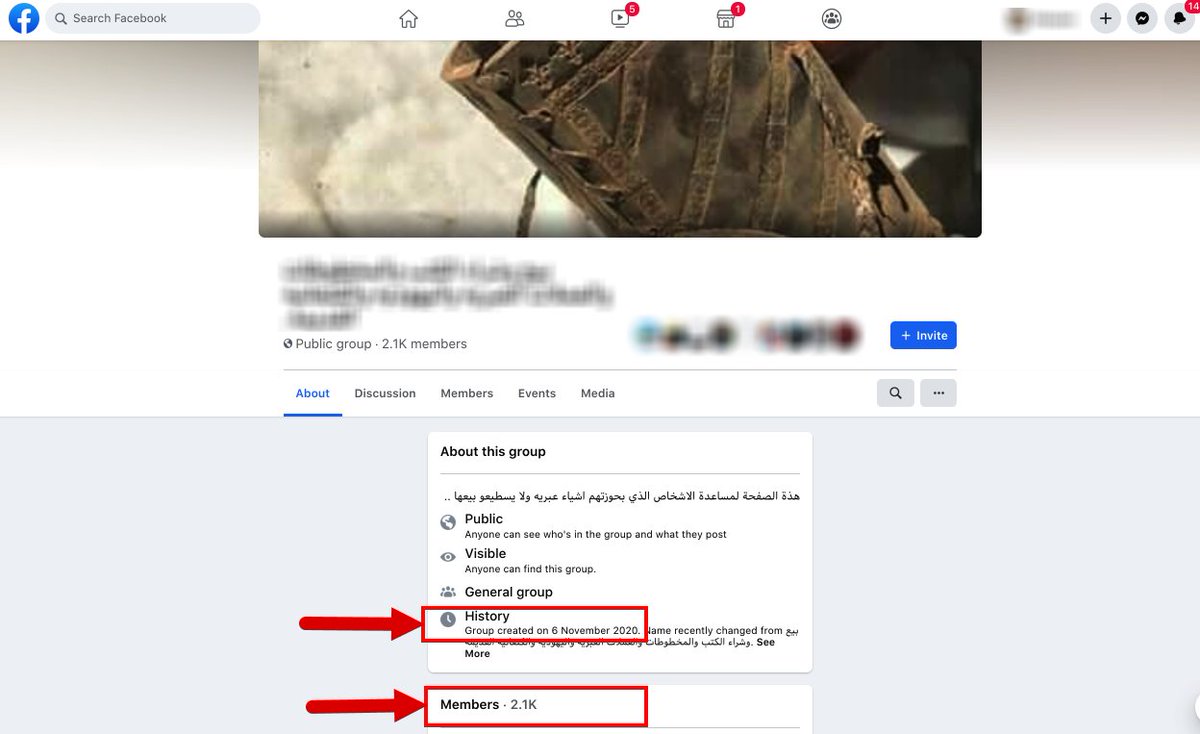

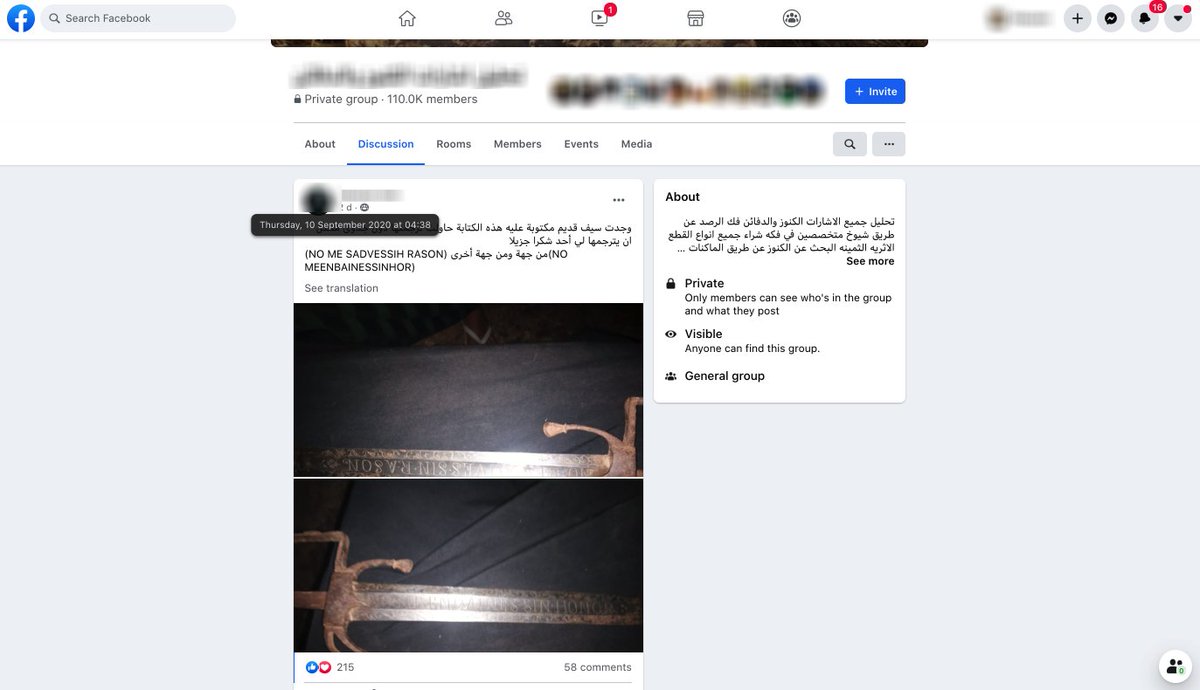

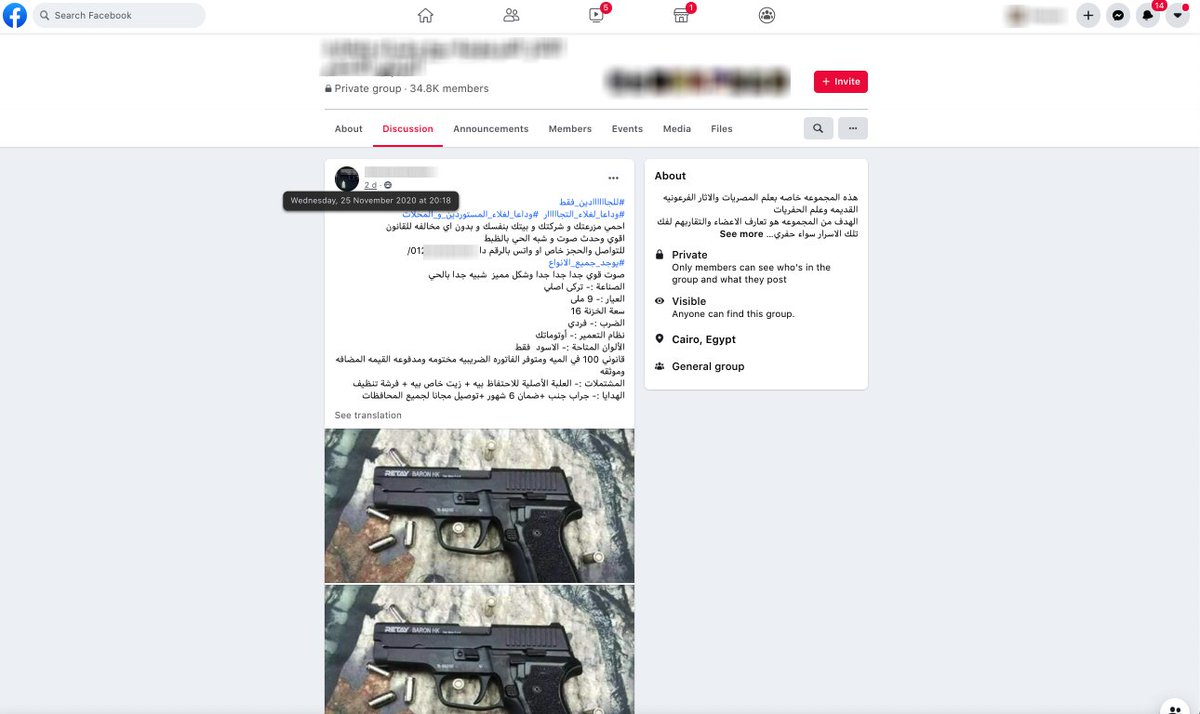

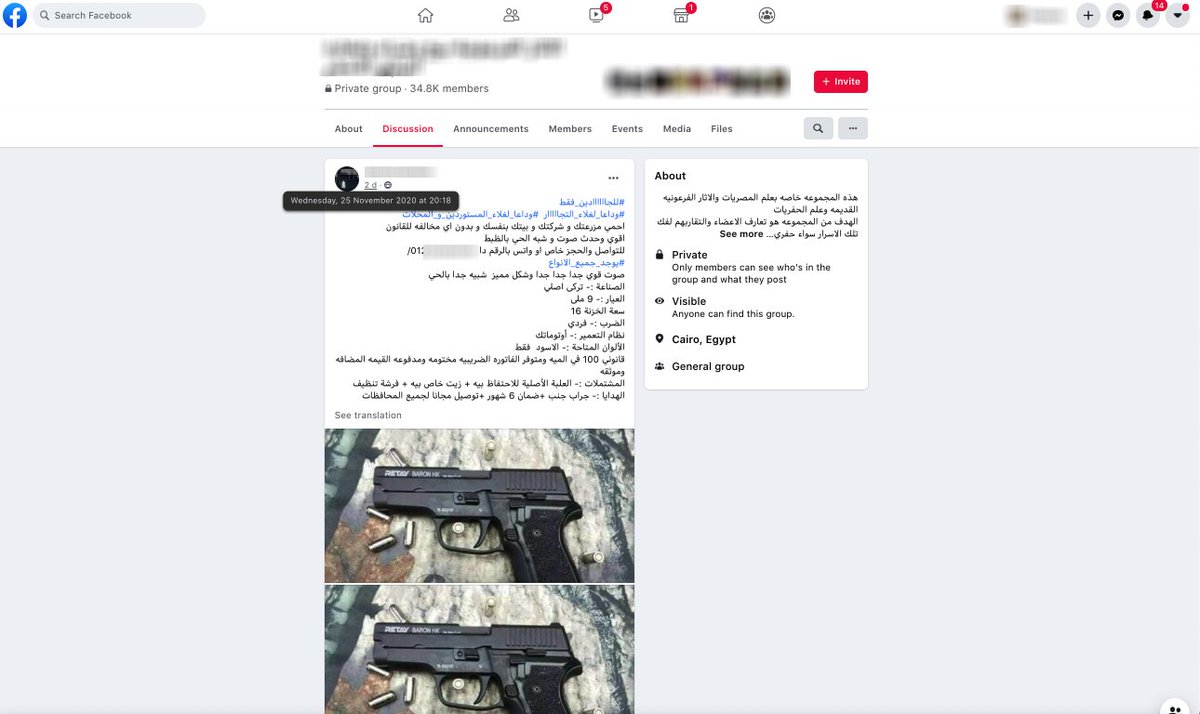

On Saturday, November 28, ATHAR found and reported an advertisement post in a Facebook antiquities trafficking group that was offering weapons for sale to anyone in Egypt.

The user, listed in Cairo, was offering delivery to any governorate.

Facebook's Community Standards explicitly ban content that "Attempts to buy, sell, trade, donate, gift or solicit firearms...between private individuals, unless posted by a real brick and mortar store, legitimate website, brand or government agency"

facebook.com/communitystand…

Facebook has had this "more strict" gun sale policy in place for years.

In 2016, Hayley Tsukayama (now a legislative activist for the tech-funded Electronic Frontier Foundation) wrote in @washingtonpost about Facebook's policy change to make gun sales harder.

washingtonpost.com/news/the-switc…

But earlier this year, @mattdrange @protocol found that weapons were still easy to buy and sell on the platform despite Facebook's policies. protocol.com/facebook-gun-s…

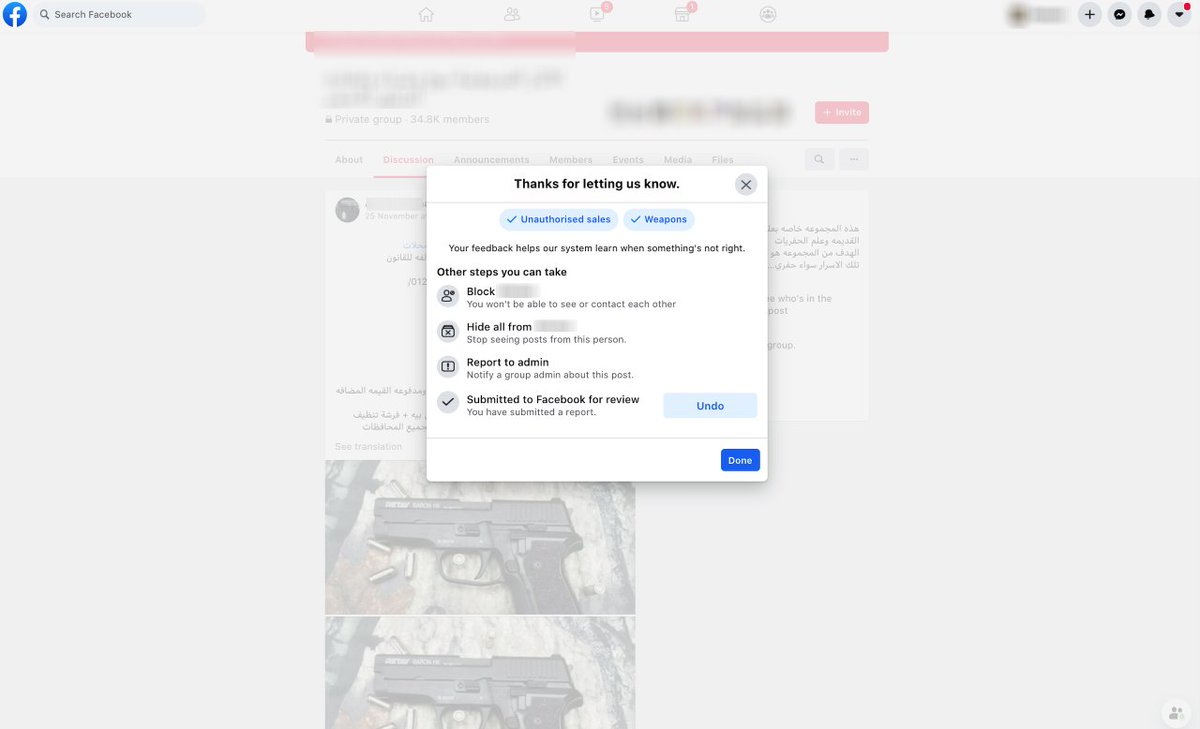

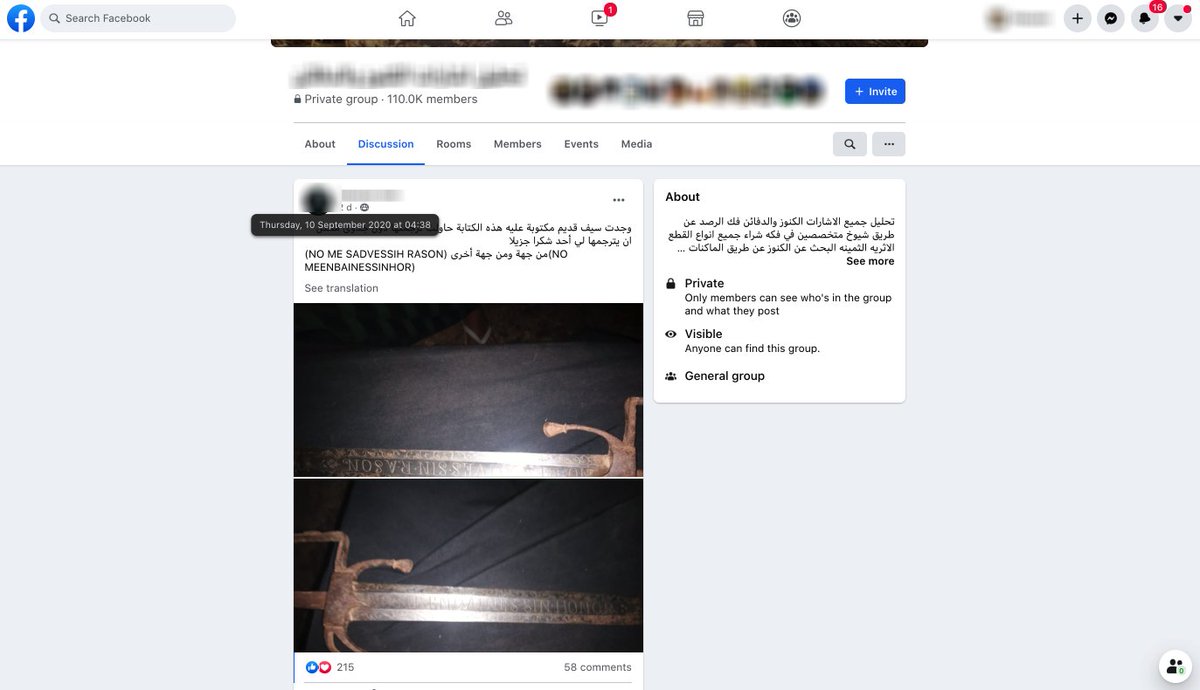

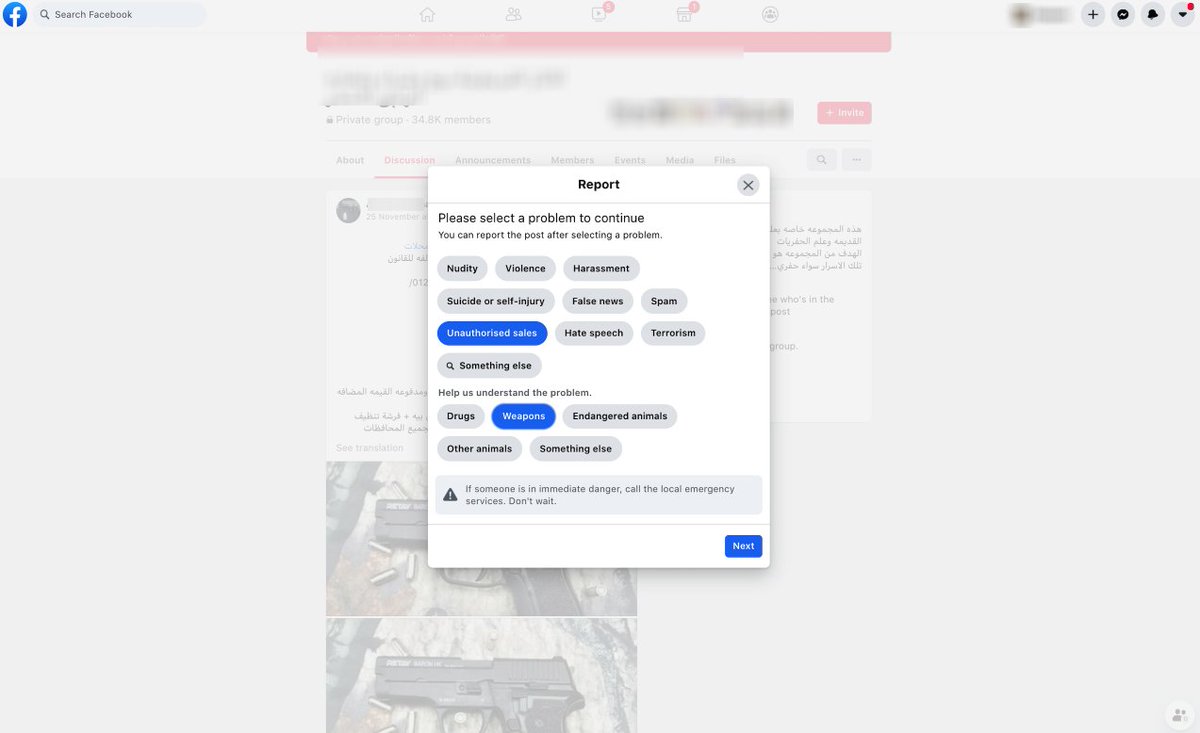

ATHAR reported the weapon offered in the antiquities trafficking group to Facebook through the platform's reporting mechanism.

Unlike for trafficked antiquities, there is a dedicated reporting mechanism for weapons on Facebook. Presumably this should help the AI ID violating content.

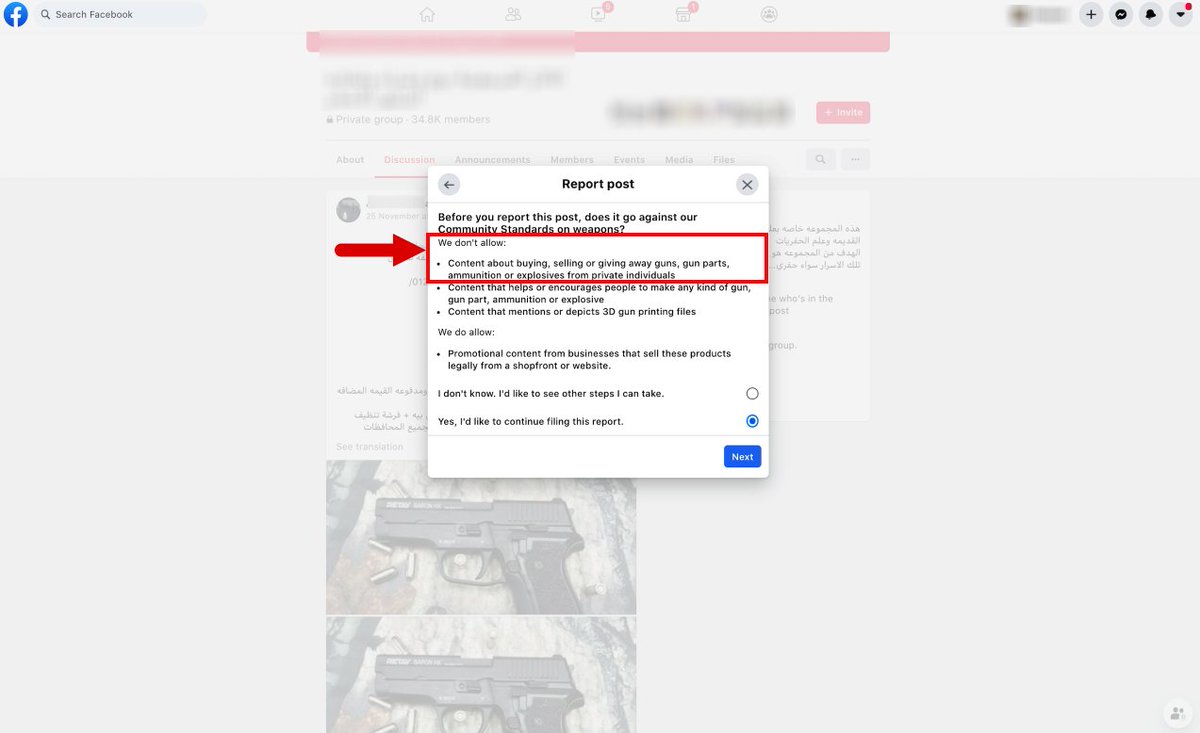

Based on Facebook's own listed policy, we confirmed that we did in fact want this post reported for a Community Standards violation.

After all, not only was the weapon sale a violation, it was in a group for antiquities trafficking, also against Facebook policies as of June '20.

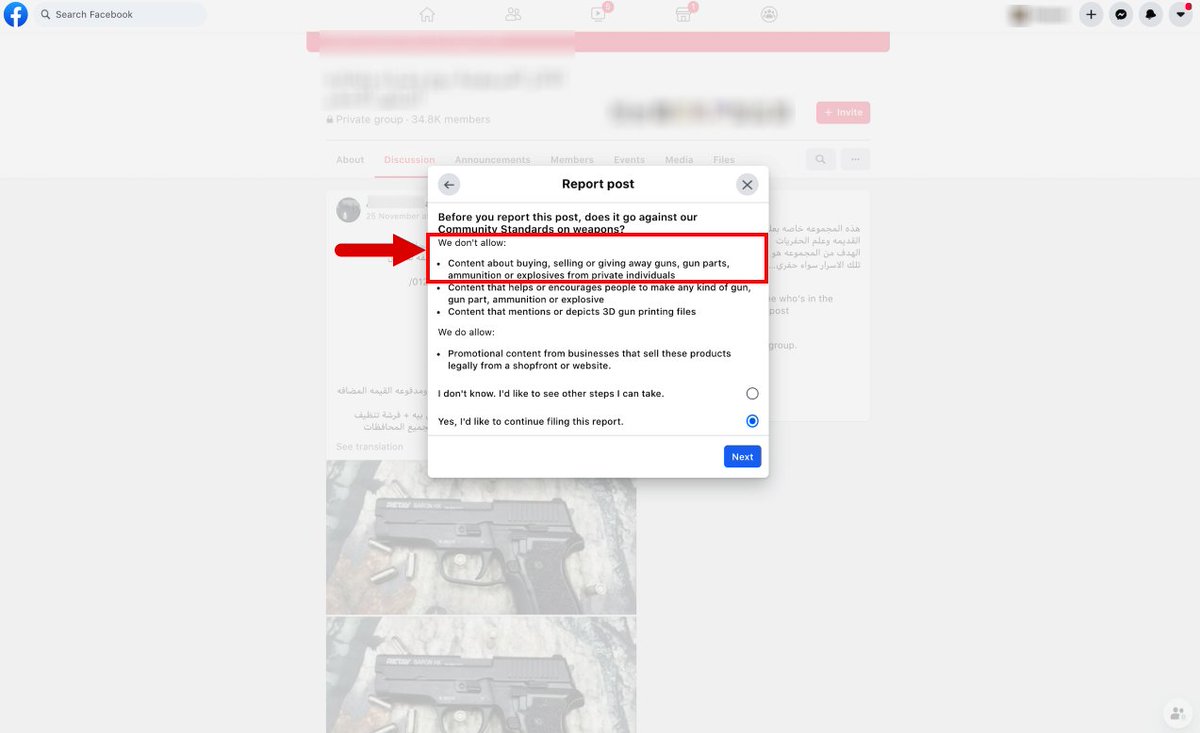

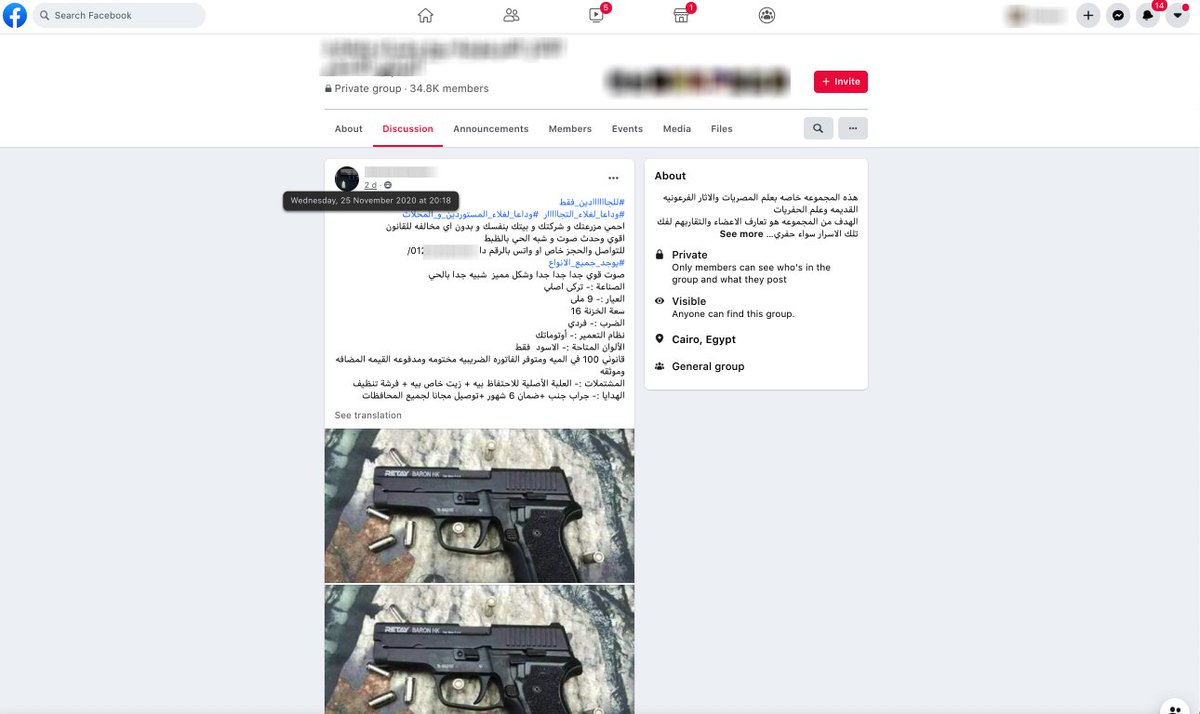

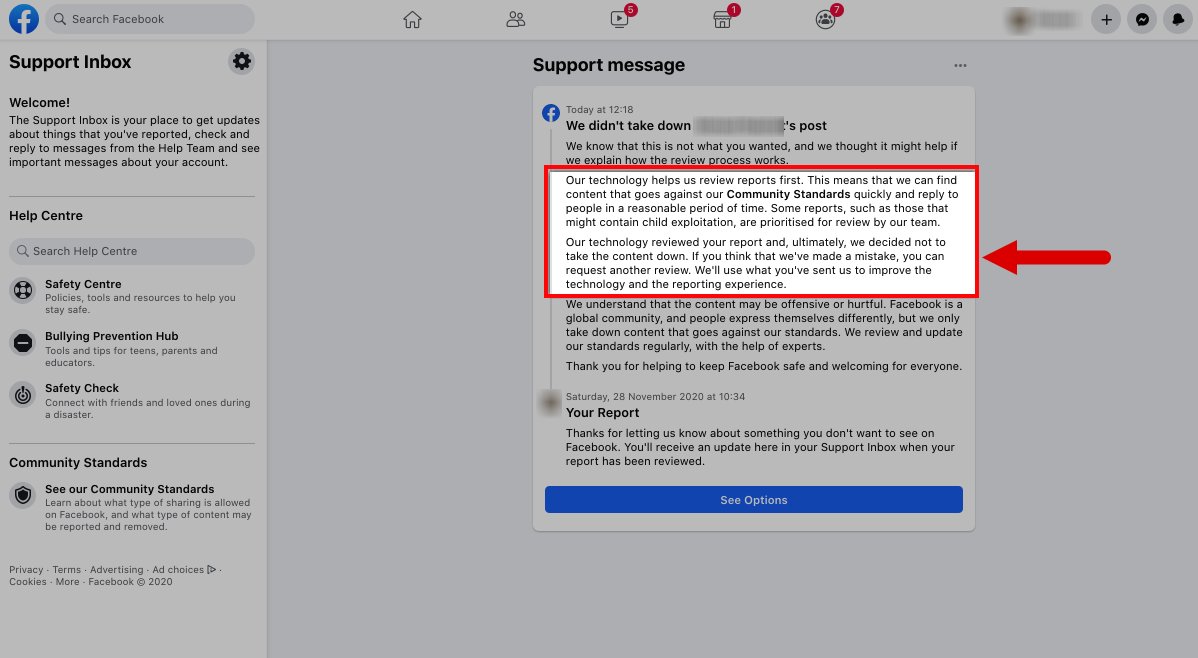

Today, we got back Facebook's ruling on this post that explicitly offered guns for sale (with delivery!)

Facebook said that its AI responsible for identifying the post found it not to be in violation and it would not be removed.

Facebook has made a major shift to AI, claiming it is helping moderators ID content that needs review.

But ATHAR's weapons report in this Facebook trafficking group is the clearest example yet that the company's AI is not as good as claimed.

gadgets.ndtv.com/social-network…

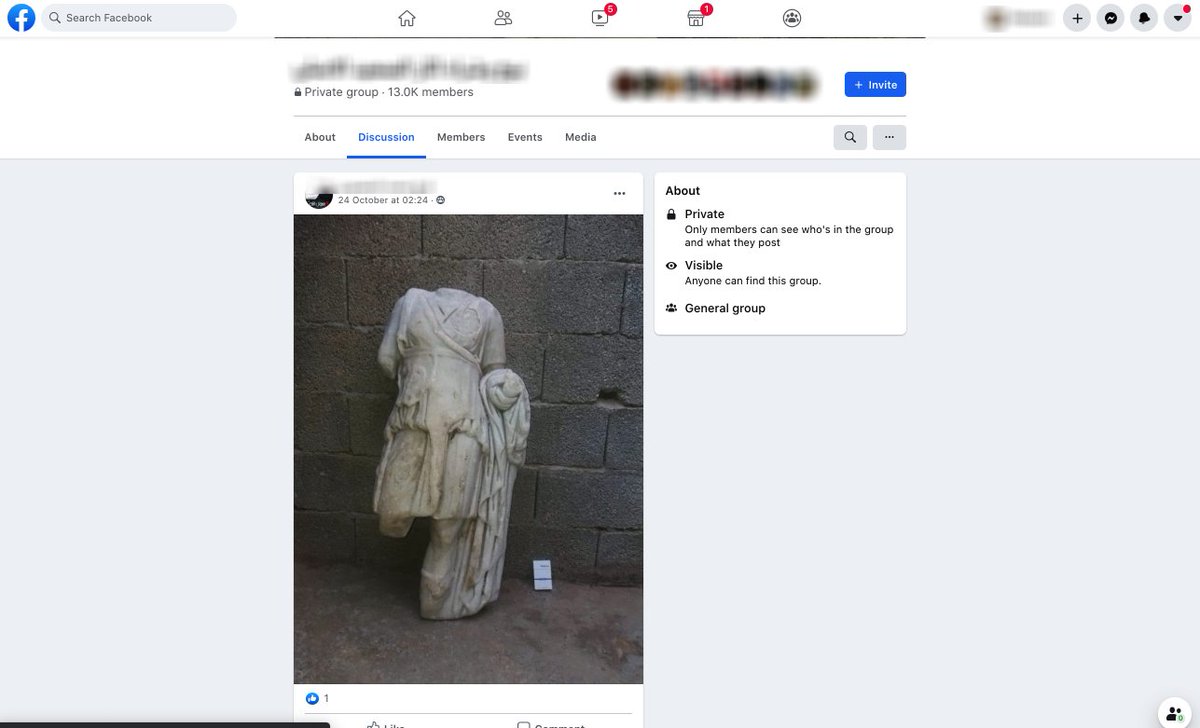

Facebook's AI is apparently unable to ID a reported post that is explicitly offering guns for sale. The only images in the post are a close-up of a gun and bullets.

Facebook appears to have been grossly overselling its AI abilities to Congress, the public, and its investors.

Remember when Facebook used the "broccoli or marijuana" challenge to highlight how great its AI was at identifying images?

That's great for broccoli & marijuana, but apparently it doesn't work so well for clear gun photos.

@businessinsider @Hamilbug businessinsider.com/facebook-used-…

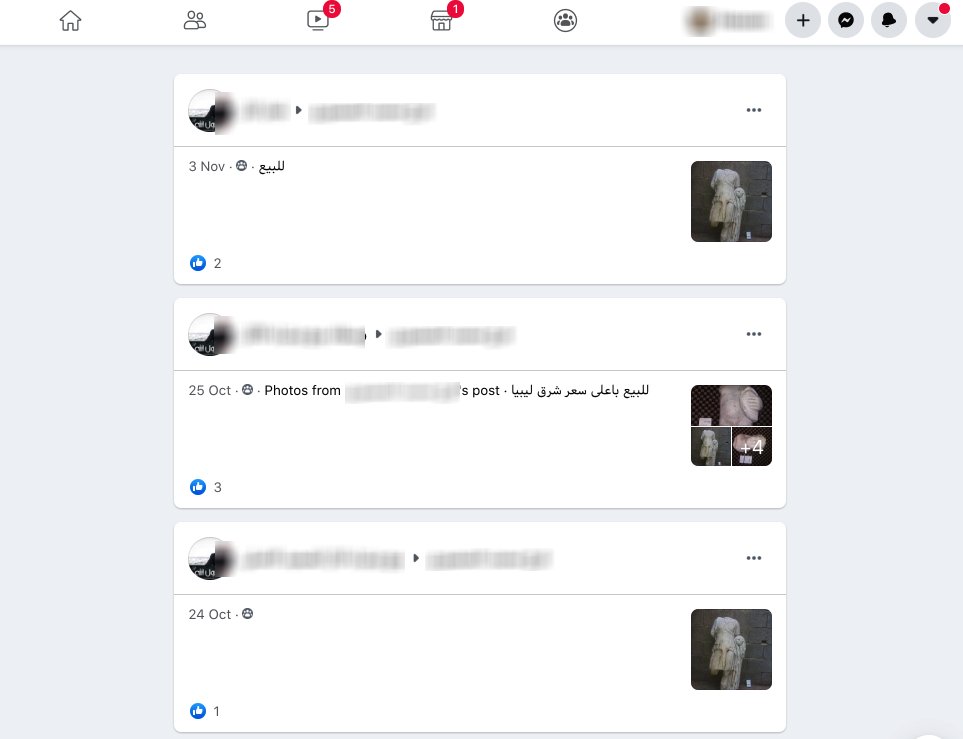

Aside from the image (a gun that FB's moderation AI apparently couldn't catch), there's the text of the post, which offers weapons and notes that they can be delivered to any governorate in Egypt.

But the text is in Arabic... images aren't FB's only AI blind spot.

Earlier this month, @marcowenjones pointed out that there is a blind spot for content in Arabic.

It's not just Arabic. Our research on Facebook trafficking found blind spots for any language that is not written in Latin script (and even for Latin-script languages, detection isn't great).

https://twitter.com/GSECForum/status/1329074409563308034

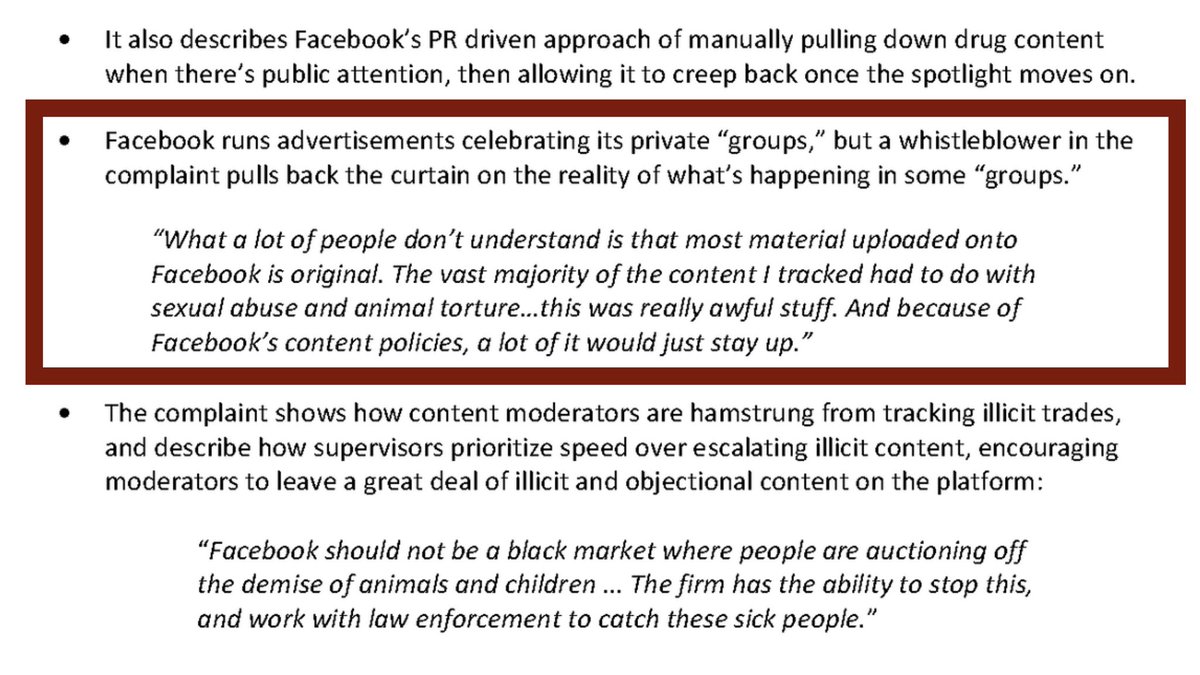

Facebook has failed to act when faced with rampant crime.

It has left its users, the public, & its investors at risk.

That’s why we joined @_CINTOC @CounteringCrime to file an SEC whistleblower case on the ongoing harm caused by crime in Facebook groups.

counteringcrime.org/press-release
