The word "government" is often more obfuscating than enlightening.

It establishes (or regenerates) the inertia of a top-down phenomenological frame, divorced from its processes of emergence.

Let's explore what the concept of "government" means, from an emergent perspective...

We begin with the simple fact that we are not each other.

This observation warrants unpacking: we each represent an exploratory tendril of our biological species, but are physically incarnated such that our perspectives *never* fully align.

This holds even for conjoined twins.

Now, let's assume that we may measure this "experiential delta", labeling it ΔE (more later on its potential formal representation).

We may then ask how ΔE relates to:

- The "natural inertia" of an individuated being

- The unique information obtained along an individuated path

When it comes to "natural inertia" (NI), most reasonable people can agree that we at the very least share some overlapping domain of experience due to our shared evolutionary history.

To argue that...

...each person's eyes produce an entirely different subjective experience is a fun thought experiment to run when high w/ friends, but our everyday behavior belies this notion.

So let's grant that we possess shared capacities and tendencies due to our shared evolutionary paths.

But per our initial axiom, we must grant that these tendencies and capacities are forced to remain separated, as measured by ΔE.

Of course, it should be clear that this is largely a reframing of the nature / nurture tension written in terms of more fundamental axioms.

In this new grammar, we may more easily talk about what happens when the magnitude of ΔE varies locally between individuals, or at larger social scales.

But to do so we must consider the basic possibilities of inter-agent interactions, per ΔE.

In cases where NI is high and ΔE is low, we're likely to see a kind of "alignment" or "coherence" emerge in relation to the agents involved.

In other words, if we share a bio-platform and our life paths overlap significantly, this prunes down our possibilities for conflict.

However...

ΔE is a fractal (scale-specific) metric. That is to say, even within ostensibly shared spaces that globally "minimize" ΔE, the local divergence can just as readily generate destabilizing frustration.

For example: brothers fight, and often with more fervor than strangers.

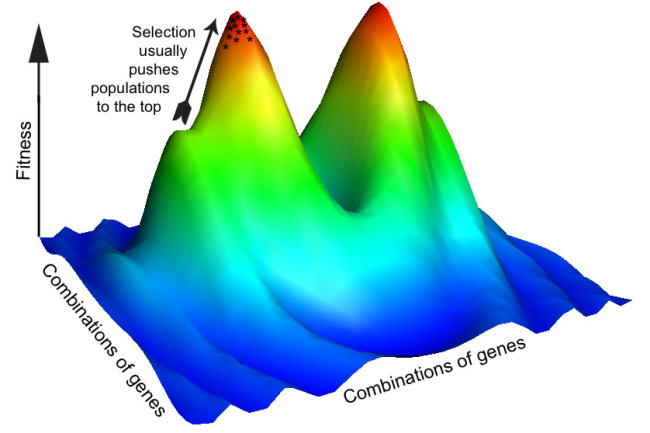

So the problem is complicated by the need to stabilize interactions between NI and ΔE fractally: no family, community, or nation will persist if its members constantly expend energy fighting and thus insufficiently attend to the adaptive pressures induced by entropy.

Let's call our evolved intuitions in relation to this logic humanity's "peaceful attractor" (PA).

We "orbit" the PA when our ΔE remains within some threshold that satisfies the following criteria:

- NI*ΔE at a given scale captures adaptively sufficient levels of information...

- NI*ΔE does not exceed some critical threshold at which a "decoherence event" (DE) occurs.

(To fully appreciate such DEs, you must understand the relationship between Julia sets and the Mandelbrot set, as well as the concept of connectedness)
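
For readers who want that relationship in concrete form: a standard result is that the Julia set of z → z² + c is connected exactly when c lies in the Mandelbrot set, i.e. when the orbit of 0 under that map stays bounded. Below is a minimal Python sketch of the check. The function name, the iteration cap, and the sample values of c are my own illustrative choices, and a finite iteration count only approximates membership. How this maps onto NI*ΔE is not specified here, so treat it purely as background.

```python
def orbit_of_zero_stays_bounded(c: complex, max_iter: int = 200, radius: float = 2.0) -> bool:
    """Approximate Mandelbrot membership test for the parameter c.

    Iterates z -> z*z + c starting from z = 0. If |z| ever exceeds 2,
    the orbit is guaranteed to escape to infinity, so c is outside the
    Mandelbrot set and the Julia set for c is disconnected.
    Surviving max_iter steps only suggests (does not prove) membership.
    """
    z = 0j
    for _ in range(max_iter):
        z = z * z + c
        if abs(z) > radius:
            return False
    return True


# Hypothetical sample parameters, chosen only for illustration.
for c in (0j, -1 + 0j, 1 + 0j):
    label = "connected" if orbit_of_zero_stays_bounded(c) else "disconnected"
    print(f"c = {c}: Julia set looks {label}")
```

The point of the analogy, as far as the sketch goes, is that a small change in one parameter can flip the associated set from a single connected piece to scattered dust.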

These two criteria correspond, respectively, to the information-theoretic concepts of error and complexity catastrophes, which act as lower and upper bounds on the dynamics of our fractally nested adaptive walks.

At each scale our behavior must remain within these bounding parameters.
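
One hedged way to write the two bounds down, assuming NI and ΔE can each be treated as a non-negative scalar at a given scale s (the threshold symbols θ_err and θ_DE are placeholders of my own, not established quantities):

```latex
% Assumed notation: s indexes the scale (family, community, nation, ...).
% \theta_{\mathrm{err}}(s): hypothetical lower bound, below which too little
%   information is captured (error catastrophe).
% \theta_{\mathrm{DE}}(s): hypothetical upper bound, above which a
%   decoherence event occurs (complexity catastrophe).
\[
  \theta_{\mathrm{err}}(s) \;\le\; NI(s)\cdot\Delta E(s) \;\le\; \theta_{\mathrm{DE}}(s)
  \qquad \text{for every scale } s .
\]
```

Orbiting the PA then means this inequality holds at every scale simultaneously, not just at one.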

And that's why–at each scale–forms of collective mediation emerge to navigate the conflicts introduced by the absolute ineradicability of ΔE.

*It doesn't matter what you call these forms. They are a guaranteed emergent property of the scale-specific tensions induced by ΔE.*

And herein lies the issue with which we began. The fact that we label one scale of this fractally emergent tendency "government" blinds us to the reality that so long as we retain individuated agency mediated by NI interactions, scale-specific "governance" *will* emerge.

It will emerge because absent such mechanisms, no coherent structure at a given scale of complexity can persist. It will either fail to regenerate, or decohere via conflict over incommensurate scale-specific perceptions.

This is the blind spot of the "localism" movement.

Thus I've argued that we're nowhere close to comprehending how to navigate our present degree of emergent complexity at scale.

Without a decent metric with which to observe the scale-specific stability and adaptive capacity of multi-scale systems, we're basically flying blind.

We don't know when it's appropriate to allow for the emergence of a novel layer of global integration, or when we must dissolve institutions of presently centralized control.

So we take stances that assume the sustainability of these ideas' mutual exclusivity, however unlikely.

I do, however, believe such tools are possible.

My bet is on tools capable of tracking our "fractal momentum", which could in theory give us the ability to speak coherently about how likely we are to run afoul of the aforementioned bounding parameters and induce decoherence.

As for our ability to responsibly manage such tools without abusing the information they produce, whether for authoritarian over-centralization or needless fragmentation, it's an open question to what degree we're fundamentally constrained by our species-scale NI.

And it's this question that animates the deeper philosophical debate concerning whether we are–or will ever become–capable of consciously stewarding that NI away from our "fundamental fallenness", or "original sin".

Either way, you can rest assured it will involve "government".

Errata: "In cases with NI high and ΔE low"
