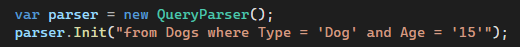

1/ The good thing about putting this stuff in the open is that a lot of people with the right background are looking at the data. Here I will highlight the work (and method) @AlbertoAut did to get a more precise estimate of the Persons/day metric.

https://twitter.com/federicolois/status/1415004781907742726

2/ The problem, as originally presented in the thread, is that we need to know how the incidence changes when we include the left-out estimated deaths. I used a simple approach: the mean for the cohort.

https://twitter.com/federicolois/status/1415004773468807178

3/ Here we got a surprise. I thought I was overestimating it (the mean was over the median). Well, as I will show here, @AlbertoAut found that the mean was actually below it. Not impossible, but surprising.

https://twitter.com/federicolois/status/1415004777688313857

4/ What he did was pretty clever. We don't have the details, but we can still use the information that is available to us from other sources. He found the daily doses that were distributed. I have seen that in Argentina too (more on that in a later thread).

5/ From there you can estimate the total days people have spent in each cohort during that period, since you know how many people were contributing to each cohort on each day.
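To make the idea in 5/ concrete, here is a minimal sketch in Python. It is not @AlbertoAut's actual calculation: the function name, the numbers, and the convention that a person counts from the day they enter the cohort until the end of the window are all assumptions for illustration.

```python
from itertools import accumulate


def person_days_from_daily_entries(daily_entries):
    """Total person-days when daily_entries[d] people join the cohort on day d.

    Assumption (hypothetical convention): everyone who joins stays in the
    cohort until the end of the window, and the joining day counts as a day.
    """
    # Running total = number of people in the cohort on each day.
    in_cohort_per_day = accumulate(daily_entries)
    # Each person in the cohort on a given day contributes one person-day.
    return sum(in_cohort_per_day)


# Made-up daily dose counts, only to show the arithmetic.
daily_doses = [100, 200, 0, 50]
total_person_days = person_days_from_daily_entries(daily_doses)
mean_days_per_person = total_person_days / sum(daily_doses)
print(total_person_days, mean_days_per_person)
```

The mean days per person that comes out of this is the quantity a simple "mean for the cohort" approach tries to guess without the daily data.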

6/ Then you need to adjust based on population statistics, persons in the study, you know, the whole deal. He then sent me the results, and they were actually very close to the ones published in the papers. Interesting. The plot thickens.

7/ We set to work to understand why. At some point we figured it out: in the same way I was underestimating, he was overestimating. Why? Because you have to ask yourself what happens when someone has been infected. They are removed from the study (they become an event).

8/ So we had to look for the deaths, the infected, adjust based on the probability of the total events... you know the drill. After we did all that, the outcome in @AlbertoAut's words was: "Answer moved towards your end of the estimate but still closer to my upper end."
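The effect described in 7/ and 8/ can be sketched in Python as follows. This is a simplified illustration, not the adjustment we actually ran: it assumes the daily event counts are known exactly (instead of being estimated from probabilities), that a person with an event stops contributing from that day on, and all names and numbers are made up.

```python
def person_days_with_events(daily_entries, daily_events):
    """Person-days when people join daily and leave once they become an event.

    Assumptions (hypothetical convention): daily_entries[d] people join on
    day d, daily_events[d] people are removed on day d, and a removed person
    contributes no person-day from the removal day onward.
    """
    if len(daily_entries) != len(daily_events):
        raise ValueError("both series must cover the same days")
    at_risk = 0
    total = 0
    for entered, events in zip(daily_entries, daily_events):
        at_risk += entered - events
        if at_risk < 0:
            raise ValueError("more events than people in the cohort")
        total += at_risk  # one person-day per person still in the cohort
    return total


# Made-up numbers, only to show the direction of the correction.
entries = [100, 200, 0, 50]
events = [0, 10, 5, 5]
naive = person_days_with_events(entries, [0] * len(entries))
adjusted = person_days_with_events(entries, events)
print(naive, adjusted)
```

Ignoring the removals gives the larger figure, which is why an estimate built only from doses distributed ends up too high.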

9/ Plugging a more accurate estimate into the model shows that there is no change in the general results. The unvaccinated cohort keeps beating the other two. And the 1 event one is still 3 times worse. So no change there. Kudos to @AlbertoAut's work!!! An example to follow.
