"Instead of leaving such work to volunteers, global institutions should marshal the funding & expertise to collect crucial data, & mandate their publication"

💯agree with @_HannahRitchie. No one wants to fund the giant whose shoulders we stand on.

1/

nature.com/articles/d4158…

The approach to science is to fund big models, expensive observations, etc. All this is needed, but somehow science seems to have forgotten the importance of careful curation & maintenance of data.

2/

Science is full of projects that improve models, do model comparisons, process some satellite data, etc, & if you are lucky there might be a task that scrapes together some data to feed the models.

3/

A nice example: Norway (coincidentally) spotted two errors in its emissions data, amounting to 2% of its national total. This did not require modelling or satellites, just someone checking consistency in data sources... Very cheap, but undervalued.

4/

Many may just naively download some data, assuming it is correct. But emission statistics are a tricky beast. Some datasets are just wrong (for explainable reasons).

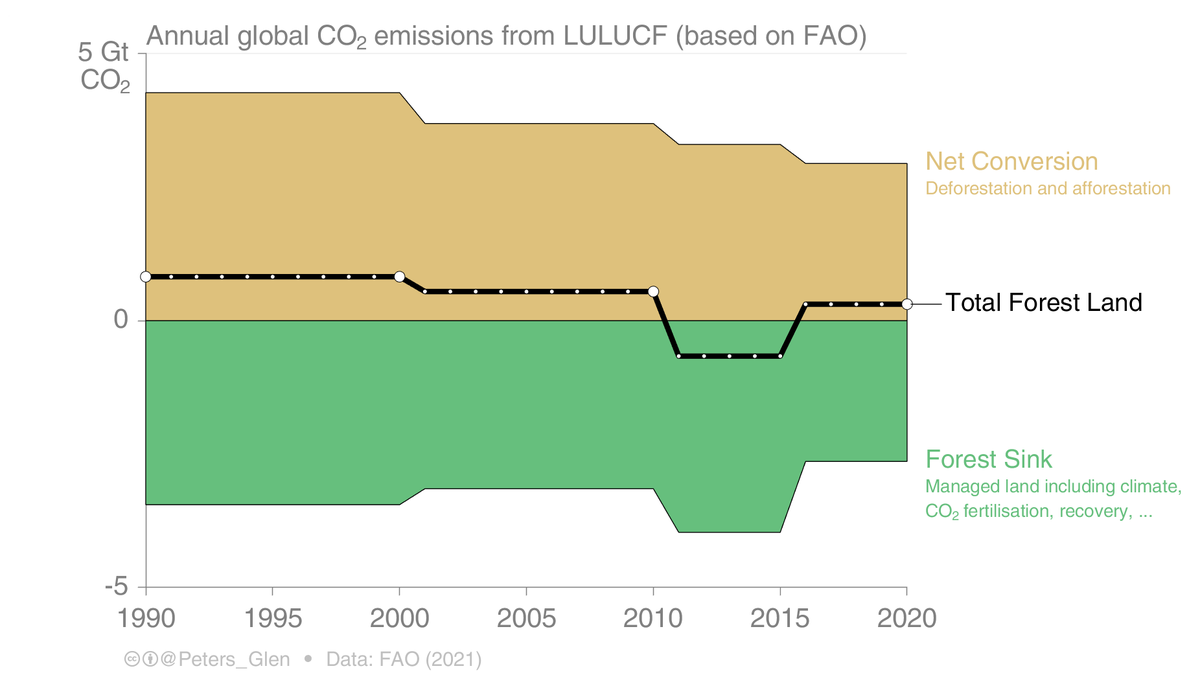

Eg, some have linked the drop in the blue curve to the Norwegian carbon price (no, it is a data problem).

5/

These two examples are from Norway, one of the richest countries in the world with a well-funded statistical agency.

Start looking at under-resourced countries, or CH4 or N2O emissions... F-gas emissions, well, that is another story.

6/

Climate is supposedly one of the greatest challenges facing society, but we essentially have a crisis in basic energy & emission statistics to feed expensive climate models, to independently track progress under the Paris Agreement, etc.

7/

As @_HannahRitchie explains, the @IEA has an incredible source of energy & CO2 emissions data, but it is expensive with strict licensing.

Some compile GHG statistics (EDGAR, CEDS, PRIMAP), but datasets vary in duration, independence, frequency, etc.

essd.copernicus.org/articles/12/14…

8/

The work we do in @gcarbonproject has *zero* direct funding; we have to align with other project activities to cover costs. Yet, we persist in updating annually.

These seem to be standard issues for anyone working on data.

9/

I think the issues go beyond institutions. The research community (funders, researchers, reviewers) has something to answer for here too. It is a matter of what to prioritise as important to fund, to write into successful proposals, to evaluate as "innovative", ...

10/10
