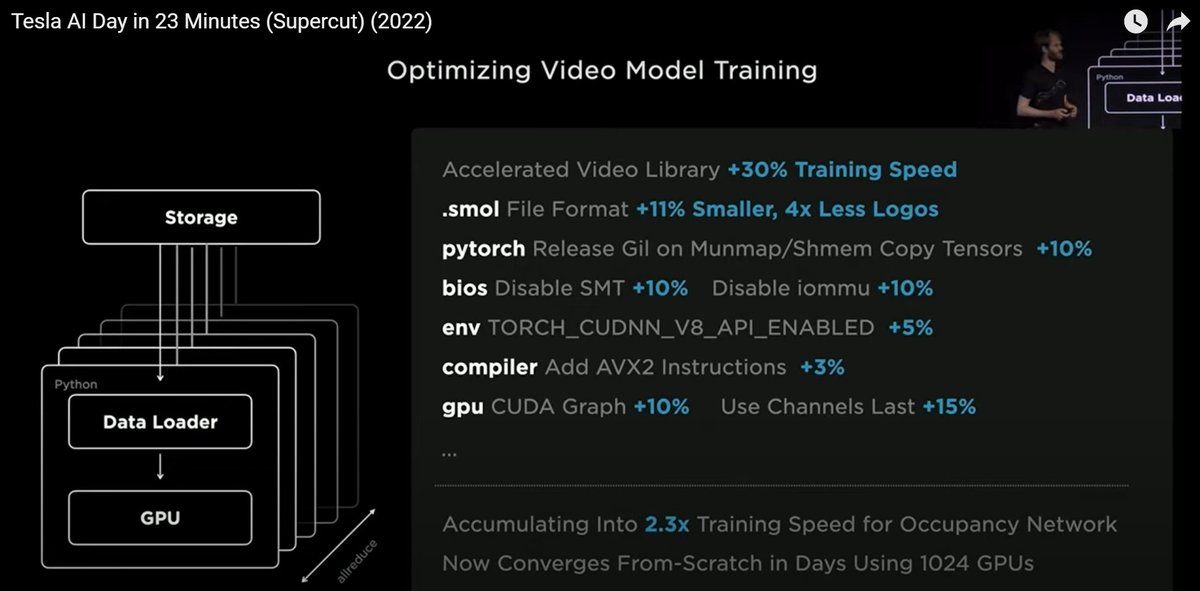

In #biocompute news, $TSLA Tesla AI Day gave some technical details on how they do their training and video labelling. It seems $NVDA Nvidia GPUs are the norm, with a 14,000-GPU HPC cluster heavily optimised on the software side. #pytorch #AVX2 #CUDA #SMT #smol #CUDNN

None of this is for the purpose of #Bioinformatics applications, rather here it's for Full Self-Driving software, but the technical details show some choices for high-throughput #AI training that one could compare to the #ComputationalBiology #ComputeAcceleration world.

More at bit.ly/biocompute

Even though $TSLA Tesla continues to use $NVDA Nvidia for their #AI training, they gave an update on their own #Computing platform, #DOJO. It is difficult to tell from the cost comparison slide whether 1 Dojo tile is worth 6x GPU boxes (each 8x A100?), but they say it's on par.

At the moment it's worth a bit more than an $NVDA Nvidia A100 GPU. The bar in the middle is faster due to faster VRAM, and the last one is a projection of hardware and software improvements. No numbers on wattage.

The Exapod cabinet demo shows, I believe, one Dojo tile per case, each roughly the size of an Apple Mac Mini, so only 4 in the picture. The compute below them is, I presume, the CPUs / hard drives / network? If someone has more insight, please comment below.

Again, nothing shown here directly relates to #Bioinformatics #ComputeAcceleration, but it shows the "Nvidia A100 as a work-horse of #AI" trend, and also the fact that people at Tesla believe they need their own silicon to move forward with freedom to operate independently of others.

Looking forward to hearing more about Dojo and how it compares to Nvidia. The thread is currently open to all for comments (so be nice and behave).

A final comment is that, from the Exapod cabinet demo, it looks like the Dojo die is quite large. I am not sure what it means when they say they want to produce 7 Exapods in the next 1-2 years, but I presume it means they haven't considered opening up Dojo to the world at all.

There is also the software side to this: it's all well and good to write software for a new #ComputeAcceleration silicon *within* a company, but if they ever wanted wide software adoption, they would have to open it up to the outside world in software terms as well

which I believe to be very unlikely. I have posted before about other #Acceleration computing platforms, such as @CerebrasSystems and @graphcoreai, but their adoption rate compared to Nvidia GPUs is still minuscule. Hopefully there is enough software, and enough of it

is nicely integrated into #Linux, #Tensorflow and other #OpenSource libraries, that we see a rich #ComputeAcceleration ecosystem come out of this wave of #AI initiatives.
