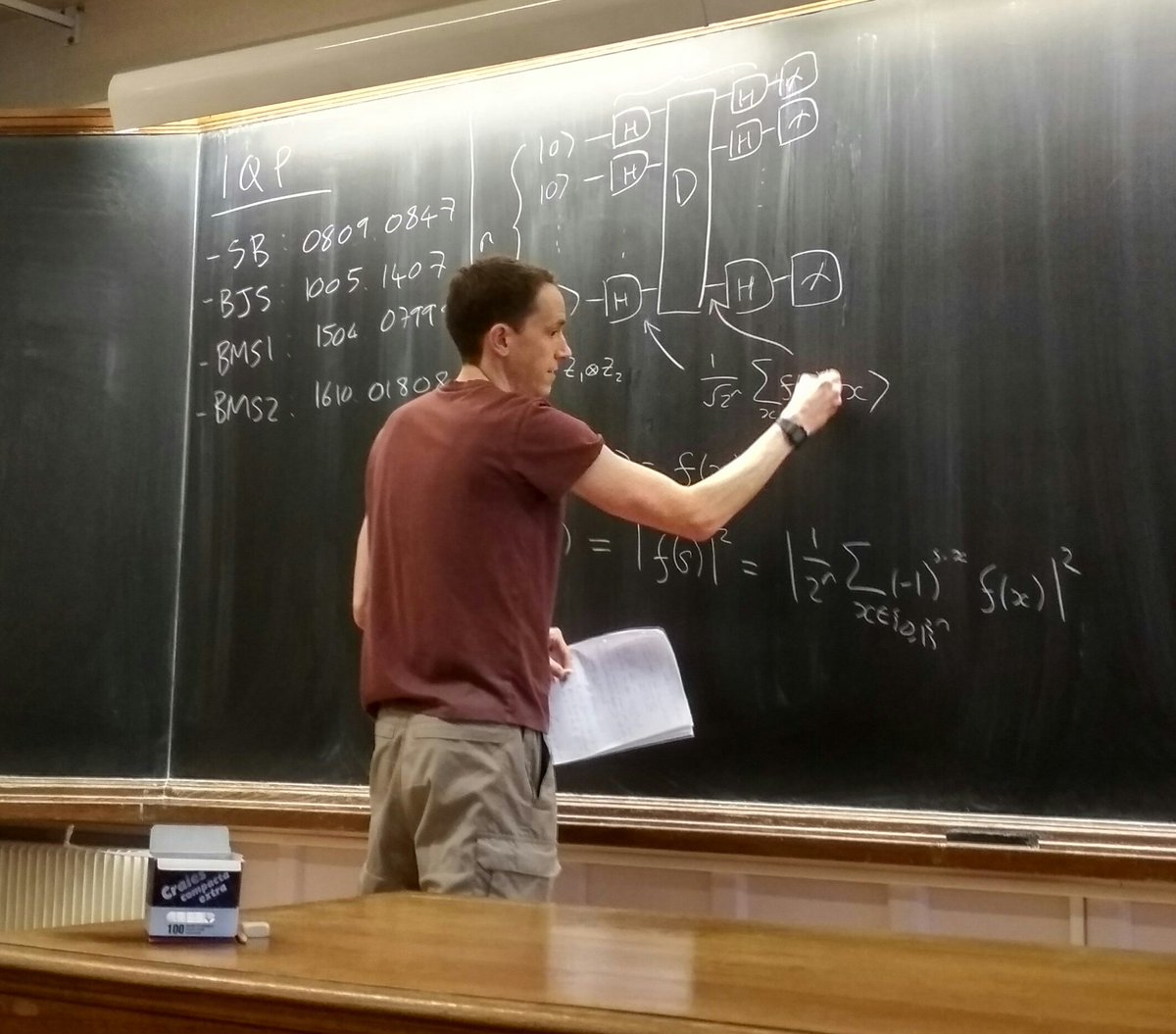

- Shepherd, Bremner 0809.0847;

- Bremner, Jozsa, Shepherd 1005.1407;

- Bremner, Montanaro, Shepherd 1504:0799;

- Bremner, Montanaro, Shepherd 1610:01808;

arxiv.org/abs/1005.1407

cstheory.com/stockmeyer@sbc…

1. Stockmeyer's Theorem => If a "good" additive-error classical sampler exists then a BPP^NP algorithm can approximate output probabilities with 1/poly(n) additive error. This is Good but not there Yet.

- The Paley Zygmund inequality: shows that the probability of p(s) being larger than 1/2^n is proportional to 2^{2n} /(expected value of p(s)^2).

- A combinatorial proof that E(p(s)^2) ≥ 1/2^n

=> Final constant anti-concentration ratio.

arxiv.org/abs/1610.01808 #LTQI

- Gao, Wang, Duan: arxiv.org/abs/1607.04947

- Bermejo-Vega, Hangleiter, Schwarz, Raussendorf, Eisert: arxiv.org/abs/1703.00466

+