#OSINT Workflow Wednesday

This week we’ll discuss how to find date/time information of web content even if it’s not obvious. This will help you establish a timeline of content or determine if an article has been altered since the original publication.

Let’s get started.

(1/8)

This week we’ll discuss how to find date/time information of web content even if it’s not obvious. This will help you establish a timeline of content or determine if an article has been altered since the original publication.

Let’s get started.

(1/8)

Step 1: Check the URL

This is a no brainer, but a lot of web content will include the original date it was published in the URL. Keep in mind that this could be an updated URL. We’ll look at other data to determine that next.

(2/8)

This is a no brainer, but a lot of web content will include the original date it was published in the URL. Keep in mind that this could be an updated URL. We’ll look at other data to determine that next.

(2/8)

Step 2: Check the Sitemap

Simply add “/sitemap.xml” to the end of a URL to check if a sitemap is available. The sitemap usually includes the date/time stamp of when all content was updated on the website. This is great for finding different URL types on a website too!

(3/8)

Simply add “/sitemap.xml” to the end of a URL to check if a sitemap is available. The sitemap usually includes the date/time stamp of when all content was updated on the website. This is great for finding different URL types on a website too!

(3/8)

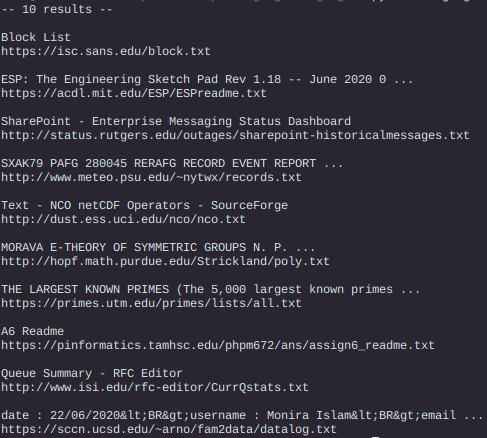

Step 3: Check Google

If you copy the URL you’re on and paste it after inurl: in Google, the date of publication will sometimes be revealed. This is a good way of testing across multiple domains if your investigation is broader.

(4/8)

If you copy the URL you’re on and paste it after inurl: in Google, the date of publication will sometimes be revealed. This is a good way of testing across multiple domains if your investigation is broader.

(4/8)

Step 4: Check Social Media

If you copy and paste the URL into Twitter, for example, and look for the oldest Tweet, you’ll likely get an idea of how old that post is. If you don’t get any results for the URL, try the article title instead.

(5/8)

If you copy and paste the URL into Twitter, for example, and look for the oldest Tweet, you’ll likely get an idea of how old that post is. If you don’t get any results for the URL, try the article title instead.

(5/8)

Step 5: Check the Images

If all methods have failed so far, right-click and inspect the images on the page. Often, websites include the date of the images as part of the name for the file. If you see an earlier date on the images than you do in the URL it might be altered

(6/8)

If all methods have failed so far, right-click and inspect the images on the page. Often, websites include the date of the images as part of the name for the file. If you see an earlier date on the images than you do in the URL it might be altered

(6/8)

Step 6: Check the Comments

Look for the oldest comment on the page if comments are available. While this won’t give you an exact publication date/time, it’ll give you a pretty good idea of how old the article is.

(7/8)

Look for the oldest comment on the page if comments are available. While this won’t give you an exact publication date/time, it’ll give you a pretty good idea of how old the article is.

(7/8)

Step 7: Check the Archives

Using a tool like Web Archives, you can quickly check to see if the page you’re looking at has been archived. If you check for the earliest archive date, you can use it to determine a date range or find discrepancies.

github.com/dessant/web-ar…

(8/8)

Using a tool like Web Archives, you can quickly check to see if the page you’re looking at has been archived. If you check for the earliest archive date, you can use it to determine a date range or find discrepancies.

github.com/dessant/web-ar…

(8/8)

• • •

Missing some Tweet in this thread? You can try to

force a refresh