Machine Learning Weekly Highlights 💡

Made of:

◆2 things from me

◆2 from other creators

◆2+1 from the community

A thread 🧵


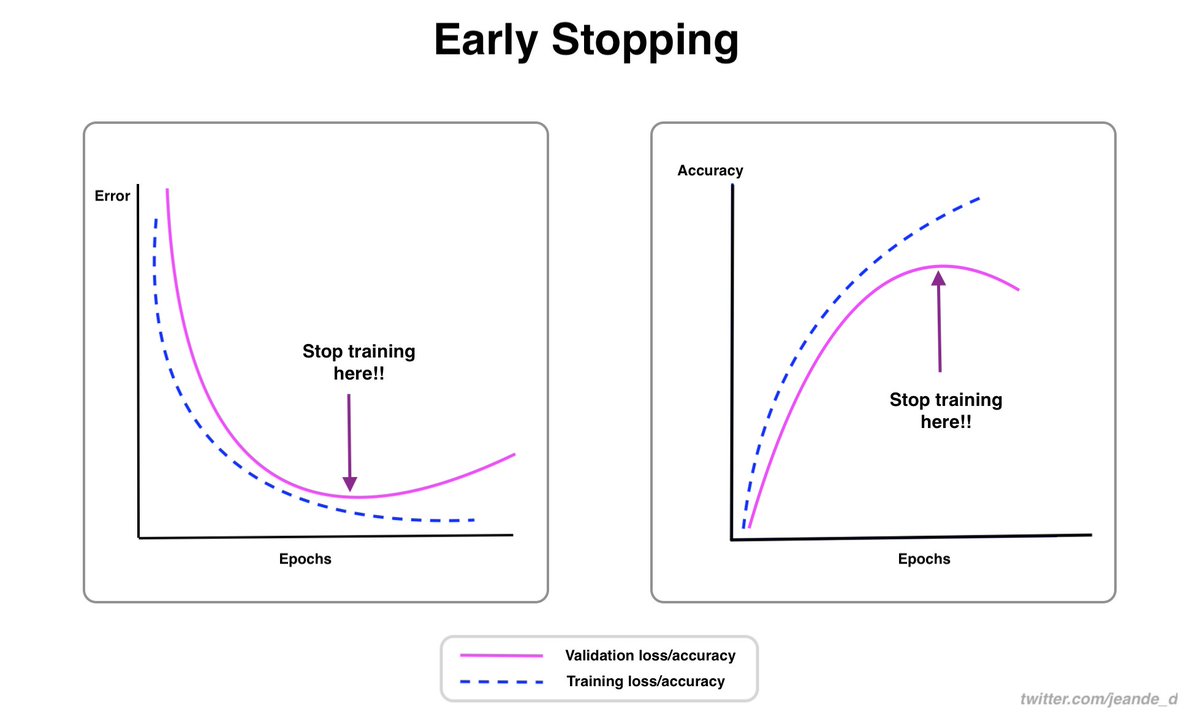

This week, I wrote about activation functions and why they are important components of neural networks.

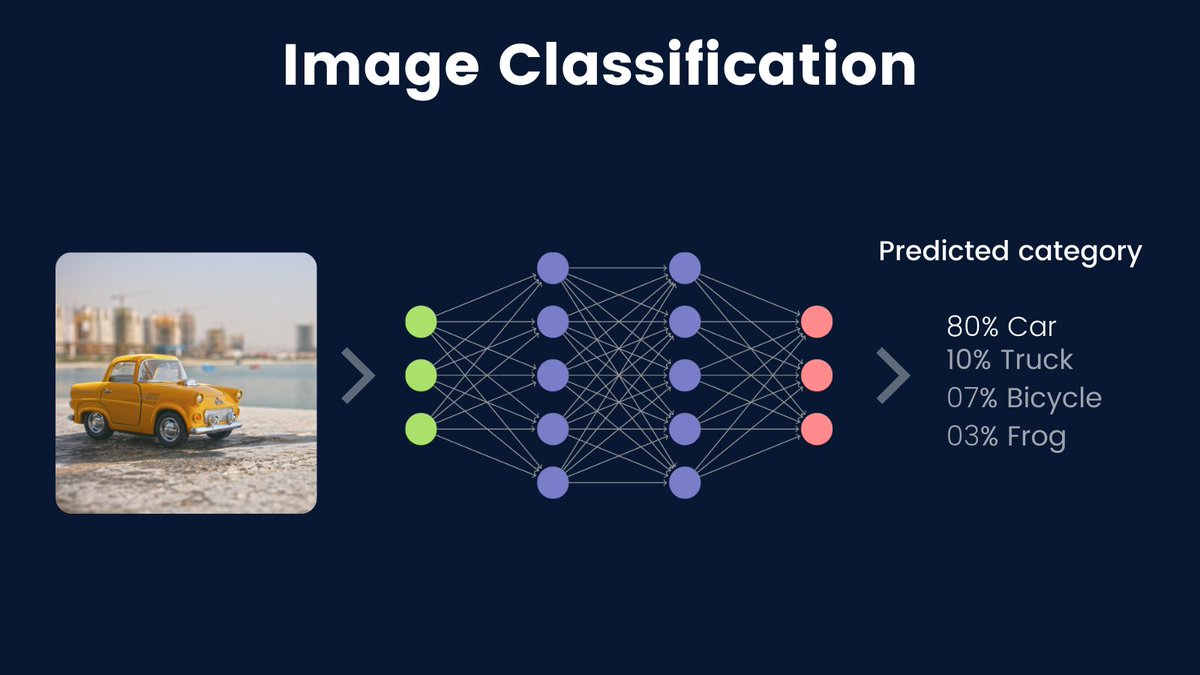

Yesterday, I also wrote about image classification, one of the most important computer vision tasks.

#1

Here is the thread about activation functions.

https://twitter.com/Jeande_d/status/1460963761284517896?s=20

#2

Here is the thread about image classification.

https://twitter.com/Jeande_d/status/1462040682437120001?s=20

From Other Creators

#1

A review of the latest cool papers

@omarsar0 made a great summary of papers he recently shared, including a survey of visual transformers, video object segmentation, neural rendering, and Graph Neural Networks (GNNs).

https://twitter.com/omarsar0/status/1459524719909163010?s=20

#2

Thinking in cycles

@RodOrtJose shared five great tips on doing machine learning the right way.

https://twitter.com/RodOrtJose/status/1459891380562497541?s=20

From the Community

#1

TensorFlow released TensorFlow GNN, a new Graph Neural Network library with a Keras API component.

blog.tensorflow.org/2021/11/introd…

I am interested in Geometric Deep Learning. I plan to study it soon and will share what I learn along the way.

#2

OpenAI has made it easy to get access to GPT-3. I got access to this massive language model within seconds. If I find time in the coming weeks, I will explore it.

openai.com/blog/api-no-wa…

#3

The NYU Deep Learning course materials (by ylecun and @alfcnz) are out.

cds.nyu.edu/deep-learning/

https://twitter.com/TheSequenceAI/status/1459619238839296002?s=20

That's it from this week.

I would like to keep doing weekly highlights to share the things I found helpful during the week. For deep dives, I am starting a newsletter next month.

Let me know if the highlights help you catch up with what's happened in the community.

Until next week, stay safe!

And make sure you follow @Jeande_d for more machine learning ideas and the latest news.
