Machine Learning Weekly Highlights 💡

Made of:

◆3 things from me

◆2 from others and

◆1 from the community

This week, I explored different object detection libraries, wrote about hyper-parameter optimization methods, and updated the introduction to machine learning in my free, complete ML online book.

I also reached 6000 followers 🎉. Thank you for your support again!

#2

Different hyper-parameter optimization techniques

https://twitter.com/Jeande_d/status/1463514411922903050?s=20

#3

And lastly, here are the updates about the complete ML online free book:

https://twitter.com/Jeande_d/status/1463858056123404291?s=20

2 things from other people

#1 Machine Learning BootCamp

@Al_Grigor has been teaching a great free machine learning course based on his book.

The course materials are available at

github.com/alexeygrigorev…

#2 Applying ML

@eugeneyan made a great collection of resources on how to apply machine learning: curated papers, blogs, and interviews.

Great collection, Eugene. Like you mentioned, there's a gap between knowing ML vs applying it. Thus, this is 💯💯

applyingml.com

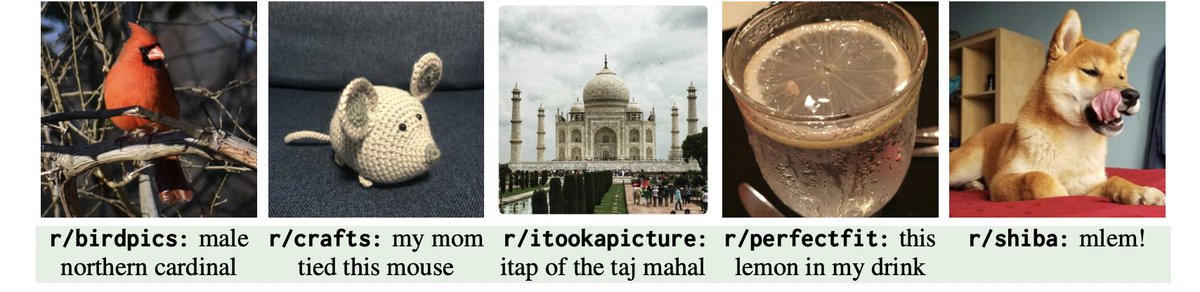

1 from the community: RedCaps

RedCaps is a new large-scale dataset of 12M image-text pairs collected from Reddit.

The RedCaps dataset can be useful for vision and language tasks such as image captioning and visual question answering.

Paper: arxiv.org/abs/2111.11431

Page: https://t.co/V0CW1xJC2W

RedCaps was collected by @jcjohnss, @kdexd, @gauravkaul7 and @zubinaysola

There is a demo of a VirTex (Learning Visual Representations from Textual Annotations) model trained on the dataset that you can try on @huggingface.

I tried it on my own images and it seems to work well.

huggingface.co/spaces/umichVi…

That's it for this week.

A summary of the highlights:

◆Object detection libraries

◆Hyper-parameter optimization methods

◆Updates on Intro to ML in my free, complete ML online book

◆Alexey's ML BootCamp

◆Eugene's Applying ML resources

◆RedCaps image-text pair dataset

Thanks for reading.

You can retweet or share these highlights with anyone you think might benefit from reading them.

Follow @Jeande_d for more ML content.

Until next week, stay safe!
