This is sweet 🥧 !

arxiv.org/abs/2202.01197

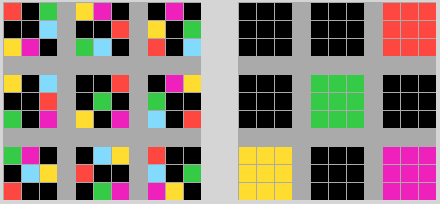

Finally a solid way of teaching a neural network to know what it does not know.

(OOD = out-of-distribution, i.e. not one of the classes in the training data.) Congrats @SharonYixuanLi @xuefeng_du @MuCai7

The nice part is that it's a purely architectural change to the detection network, with a new contrastive loss that does not introduce additional hyper-parameters. No additional data required!

The results are competitive with training on a larger dataset manually extended with outliers: "Our method achieves OOD detection performance on COCO (AUROC: 88.66%) that favorably matches outlier exposure (AUROC: 90.18%), and does not require external data."
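For anyone unsure what those AUROC numbers measure: it is the probability that a randomly picked in-distribution example gets a higher "I know this" score than a randomly picked OOD example. A minimal sketch of that definition follows. The function name and the toy scores are made up for illustration, and real evaluations would normally use a library routine rather than this quadratic loop.

```python
def auroc(id_scores, ood_scores):
    """Fraction of (in-distribution, OOD) pairs ranked correctly.

    A pair counts as correct when the in-distribution score is higher;
    ties count as half. 1.0 is perfect separation, 0.5 is chance level.
    """
    wins = 0.0
    for s_in in id_scores:
        for s_out in ood_scores:
            if s_in > s_out:
                wins += 1.0
            elif s_in == s_out:
                wins += 0.5
    return wins / (len(id_scores) * len(ood_scores))


# Toy scores (hypothetical): one in-distribution example is ranked
# below one OOD example, so 3 of the 4 pairs are ordered correctly.
print(auroc([0.9, 0.4], [0.5, 0.1]))
```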

The trick is to generate OOD examples automatically for the contrastive loss. But instead of doing it with a GAN, which is hard and flaky, they do it directly in the final feature space, just before the classification head.
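A toy sketch of the feature-space idea, not the paper's code: assume the final-layer features of one class are roughly Gaussian, sample from that Gaussian, and keep only the least likely samples as synthetic outliers. The diagonal covariance, the function names, and the candidate/keep counts are all assumptions made for illustration.

```python
import math
import random


def fit_diag_gaussian(features):
    """Per-dimension mean and variance of a list of feature vectors."""
    n, dim = len(features), len(features[0])
    mean = [sum(f[d] for f in features) / n for d in range(dim)]
    # Small constant keeps the variance strictly positive.
    var = [sum((f[d] - mean[d]) ** 2 for f in features) / n + 1e-6
           for d in range(dim)]
    return mean, var


def log_density(x, mean, var):
    """Log-density of x under a Gaussian with diagonal covariance."""
    return sum(-0.5 * (math.log(2 * math.pi * v) + (xi - m) ** 2 / v)
               for xi, m, v in zip(x, mean, var))


def sample_virtual_outliers(features, n_candidates=1000, n_keep=10, rng=None):
    """Sample from the fitted Gaussian and keep the lowest-density samples.

    The kept samples sit on the fringe of the class's feature cloud,
    so they can stand in for OOD examples without any image generation.
    """
    rng = rng or random.Random(0)
    mean, var = fit_diag_gaussian(features)
    candidates = [[rng.gauss(m, math.sqrt(v)) for m, v in zip(mean, var)]
                  for _ in range(n_candidates)]
    candidates.sort(key=lambda x: log_density(x, mean, var))
    return candidates[:n_keep]


# Hypothetical 2-D "features" for a single class.
rng = random.Random(0)
feats = [[rng.gauss(0.0, 1.0), rng.gauss(5.0, 2.0)] for _ in range(200)]
outliers = sample_virtual_outliers(feats, rng=rng)
print(len(outliers), "virtual outliers sampled in feature space")
```

Working in feature space means the sampler only has to model a low-dimensional vector, which is why it avoids the instability of training a GAN to produce full images.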

Looks like this paper is going to the stratosphere. #1 on arXiv now. Congratulations @SharonYixuanLi, @xuefeng_du, @MuCai7.
