#OSINT Tool Tuesday 🚨

Another week, another set of tools. This week let's look at Snapchat, Google Earth, and YouTube. This will include 2 #python tools and 1 web app. Shall we?

(1/5)

The first #OSINT tool is made by @djnemec and it's a #python tool called Snapchat Story Downloader. It allows you to create a db of locations of interest then extract Snapchat stories from those locations indefinitely. Classifier too. Great!

github.com/nemec/snapchat…

(2/5)

The second #OSINT tool is a #python tool I made in response to @raymserrato, who was looking to automate screenshot capturing of Google Earth. Earthshot will open and screenshot a list of coordinates you specify in a CSV. It's slow though!

github.com/jakecreps/eart…

(3/5)

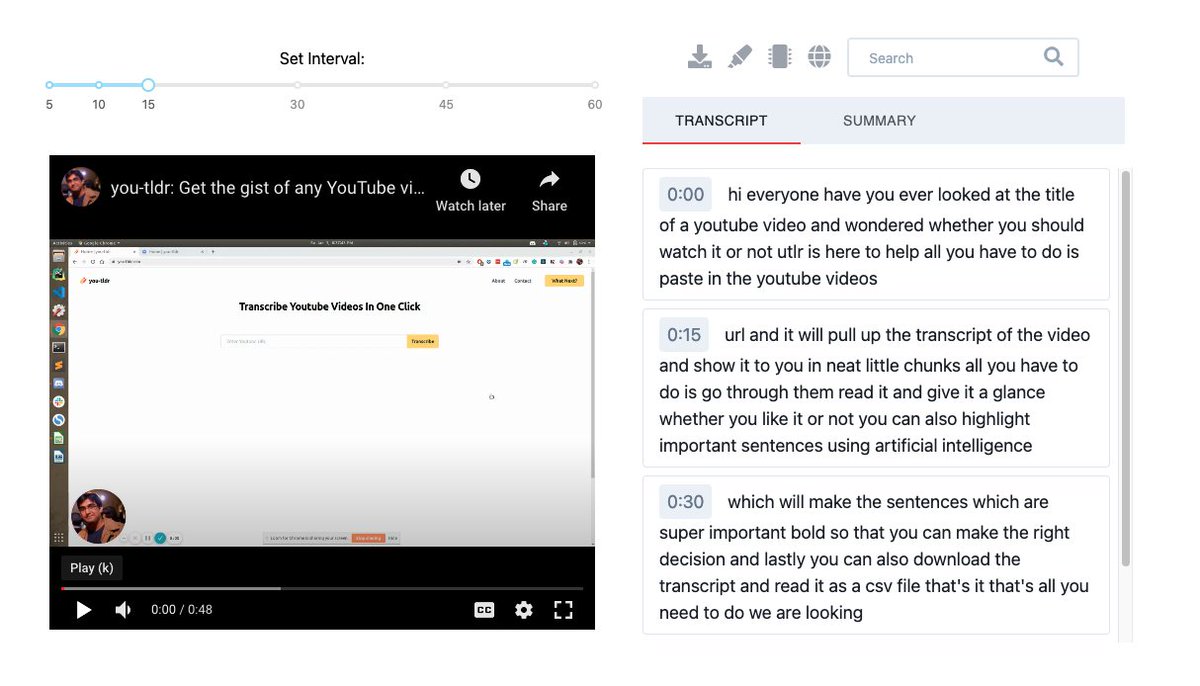
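
To make the CSV-of-coordinates idea concrete, here is a rough sketch of just the input half of a tool like Earthshot. It is not Earthshot's actual code: the `lat`/`lon` column names, the `earth_urls` helper, and the Google Earth web URL pattern are all assumptions for illustration. It only builds one link per row; opening a browser and capturing the screenshot is left out.

```python
import csv
import io


def earth_urls(csv_text, distance_m=1000):
    """Yield one Google Earth web URL per row of a lat/lon CSV (hypothetical helper)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    for row in reader:
        # Column names "lat" and "lon" are assumed, not taken from Earthshot.
        lat = float(row["lat"])
        lon = float(row["lon"])
        if not (-90 <= lat <= 90 and -180 <= lon <= 180):
            raise ValueError(f"coordinate out of range: {lat}, {lon}")
        # Assumed URL pattern: @lat,lon,altitude(a),camera distance(d),
        # field of view(y),heading(h),tilt(t),roll(r).
        yield (
            f"https://earth.google.com/web/"
            f"@{lat},{lon},0a,{distance_m}d,35y,0h,0t,0r"
        )


# Two sample locations standing in for a real CSV file.
sample = "lat,lon\n48.8584,2.2945\n40.6892,-74.0445\n"
urls = list(earth_urls(sample))
for url in urls:
    print(url)
```

Validating each row before building the link means a typo in the CSV fails fast instead of producing a screenshot of the wrong place.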

The third #OSINT tool is a web app called you-tldr. It lets you quickly scan the contents of a YouTube video, including transcription, summaries, editing, etc. Includes timestamps in the transcription too!

you-tldr.com

(4/5)

Remember #OSINT != tools. Tools help you plan and collect data, but the end result of that tool is not OSINT. You have to analyze, verify, receive feedback, refine, and produce a final, actionable product of value before you can call it intelligence.

Thanks for reading!

(5/5)
