Sharing tips on preparing your presentation slides

I just attended many thesis presentations and qual exams at the end of the semester. I compiled some common pitfalls here; hopefully they will be helpful to some.

Check out the thread 🧵below!

*Outline*

I am surprised to see so many talks starting with the OUTLINE.

No one, literally no one, will be excited by: "I will first introduce the problem, then I discuss related work, next I present our method, I show some results, and conclude the talk".

*Be concise*

Do not treat your slides as a script.

Rules of thumb for my students preparing a talk:

• Never write full sentences (unless quoting)

• Always write one-liners

• No more than three lines of text per slide

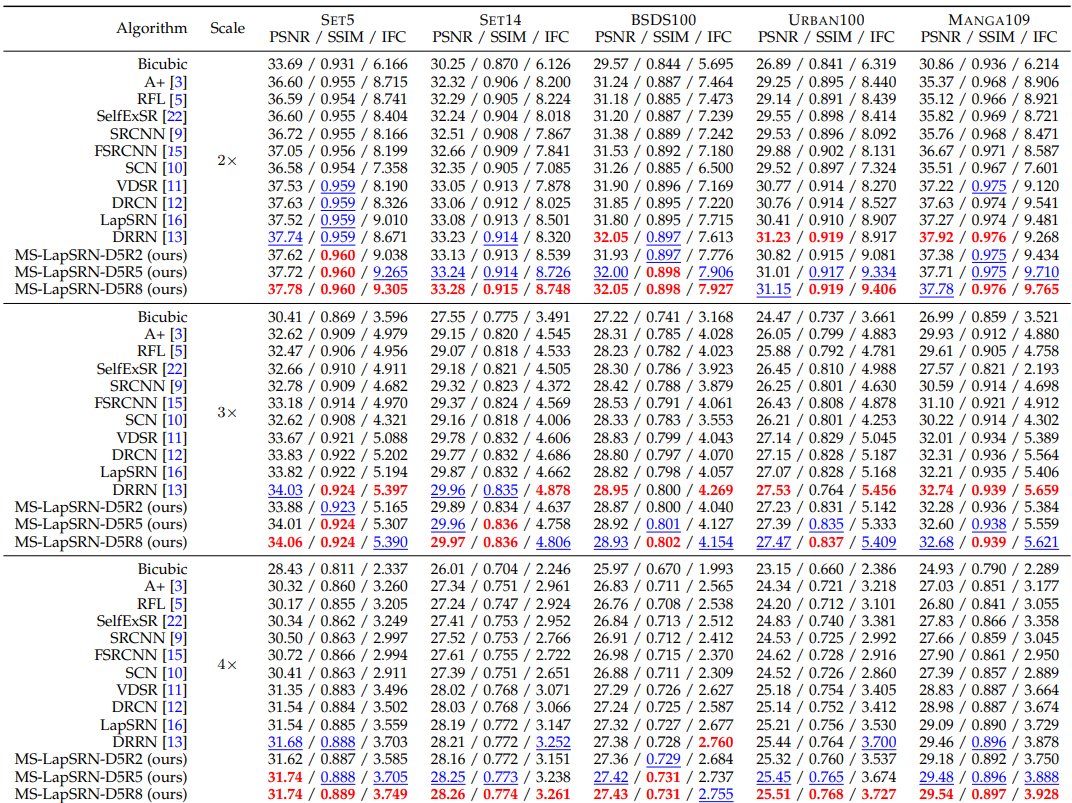

*Tables*

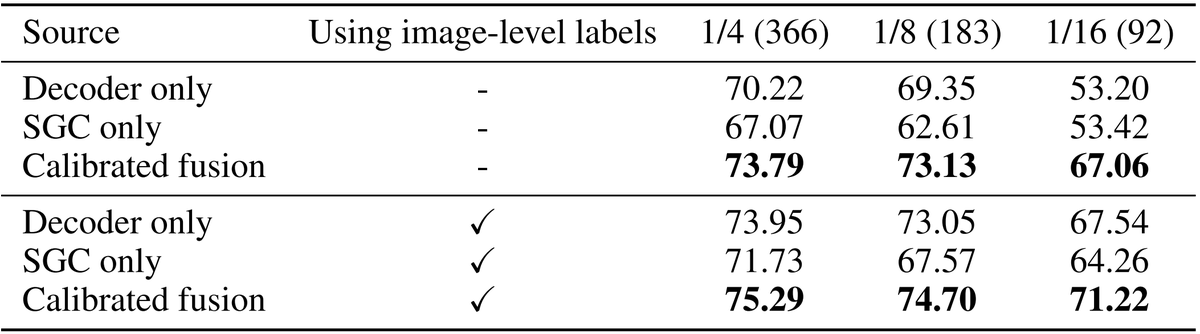

The tables in your paper/thesis are very informative, with all the citations and compared methods. This is great. But it's a disaster to present them as-is in your talk.

No one knows what [17], [39] mean. Highlight and interpret the key results for your audience.

*Figures*

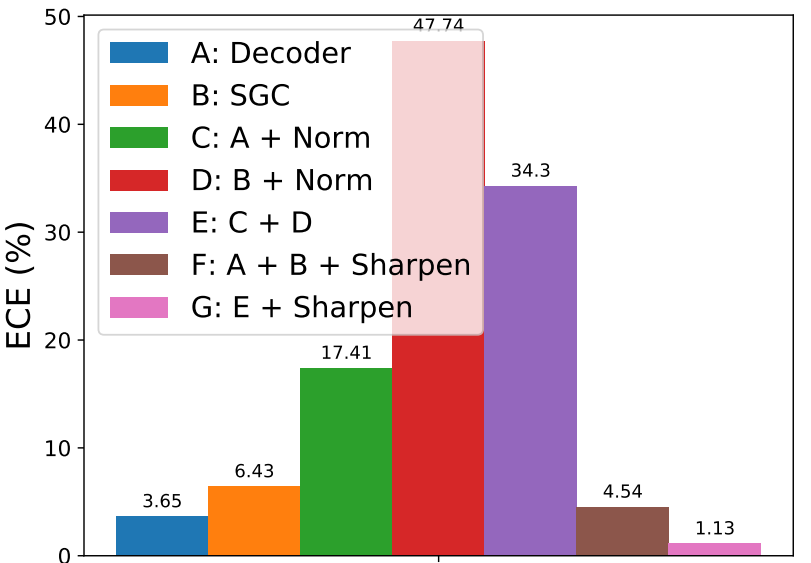

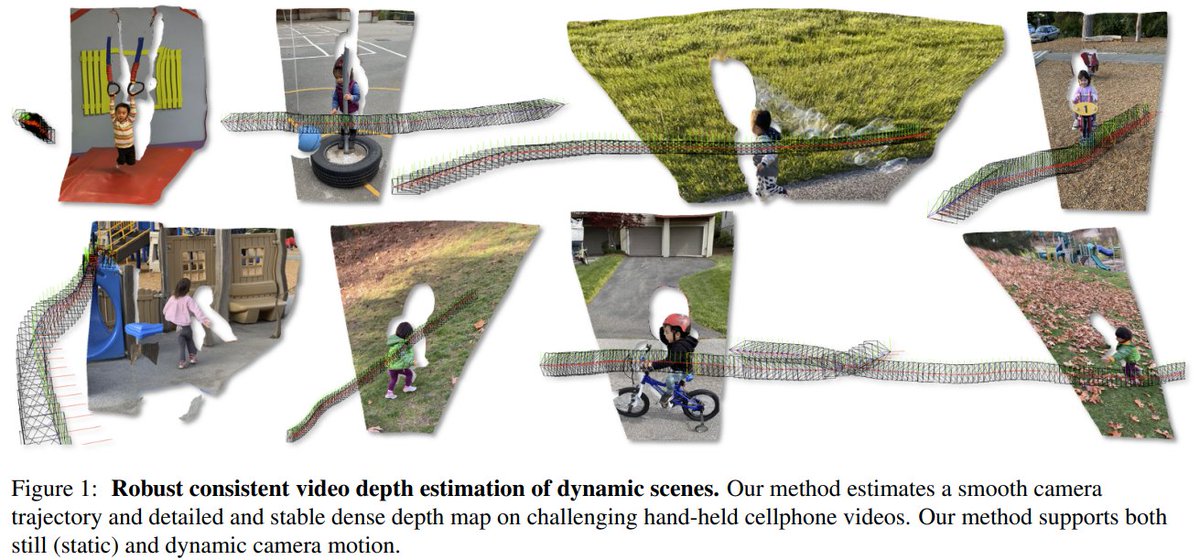

Explain how to read your plots. What do the x-axis and y-axis mean? What do the different lines mean? What can we learn from this plot?

If you plan to skip the discussion of some figures, just remove them.

*Informative slide titles*

Don't waste the most salient part of a slide on generic titles such as "Results", "Visual comparison", or "Ablation study".

The title should describe the TAKEAWAY message from that slide.

*Final slide*

Avoid stopping at the "Thank you" slide at the end. Show the main results/conclusions/contributions of your work as your final slide. This reminds people what you have done and helps them ask good questions.

*Resources*

There are many wonderful resources online. Check them out! A few pointers:

Patrick Henry Winston: ocw.mit.edu/resources/res-…

Matt Might: matt.might.net/articles/acade…

Kristen Grauman:

*Animation*

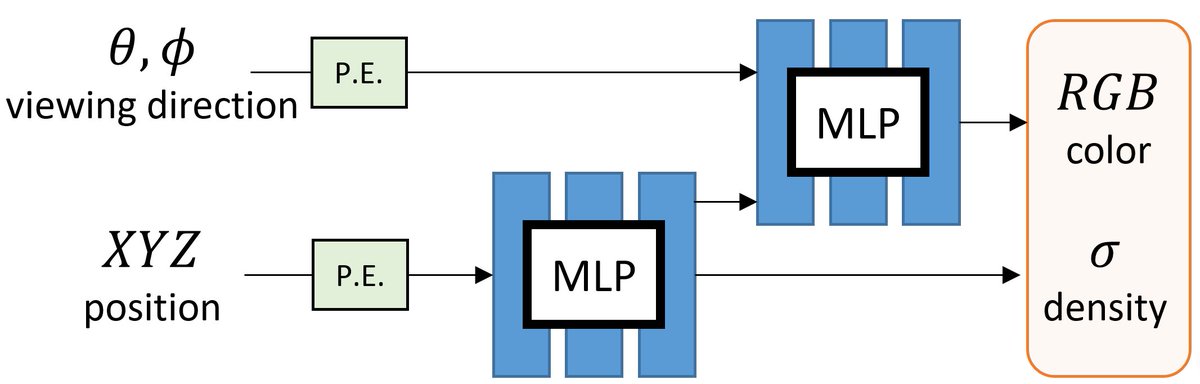

Use animation to break down a complicated diagram/figure/concept and describe it step by step.

When advancing the slides, make sure that all the components are perfectly aligned to reduce mental load.

*Videos*

Insert the video (no YouTube embedding please) and use animation to control when it appears, when it plays, and when it stops. Otherwise you may be busy hunting for your cursor to play the video during the talk.

*Level of detail*

Students tend to squeeze as much paper/thesis content as possible into the talk. This is understandable, as all of it took hard work.

But remember that your audience will be much happier to see a concise and clear talk.

*Pointer*

If you plan to point to a number, some text, or a figure in your slides, add an arrow/box/circle pointing to it (with animation). Don't use your mouse pointer.

Don't use a laser pointer for in-person talks either. Nothing is more annoying than tracking a shaking red dot.

That's all (for now)! Happy presenting!

What are your favorite presentation tips (or the practice you hate the most)?

*Emphasis/De-emphasis*

When you want to emphasize/highlight a take-home message or an important concept, make sure to “de-emphasize” the rest of the content as well.

How? Add a box covering that content with 10% transparency.
