NEW: 11 countries ink joint statement on countering commercial #spyware proliferation & abuse.

Cite "fundamental" national security & foreign policy interest 1/

🇦🇺#Australia 🇨🇦#Canada 🇨🇷#CostaRica 🇩🇰#Denmark 🇫🇷#France 🇳🇿#NewZealand 🇳🇴#Norway 🇸🇪#Sweden 🇨🇭#Switzerland 🇬🇧#UK 🇺🇸#US

2/ I'd say the joint statement on commercial #spyware is unprecedented.

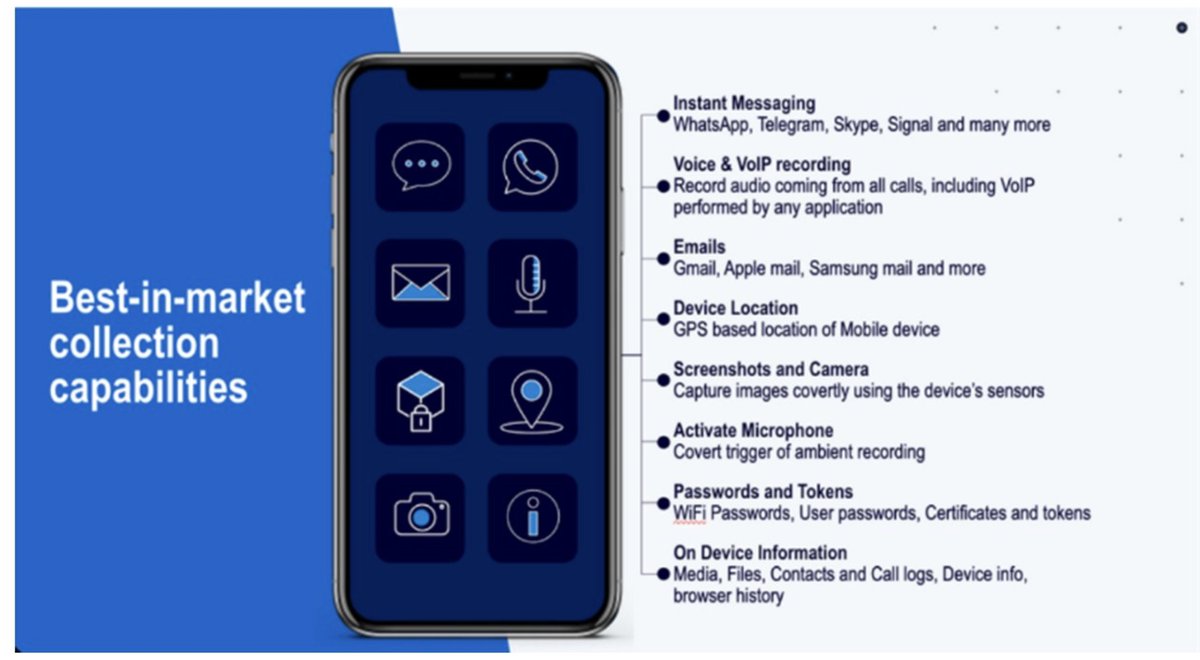

A few years ago spyware like #Pegasus was treated as a human rights issue.

But the dizzying speed of proliferation made big problems for governments, forcing them to prepare positions & action.

3/ The statement's commitment to guardrails for accountable domestic #spyware use is important.

But the devil will be in the implementation. Civil society will be watching.

(Note: issue wasn't covered in White House Spyware Executive Order on Monday, so nice to see USA commit here)

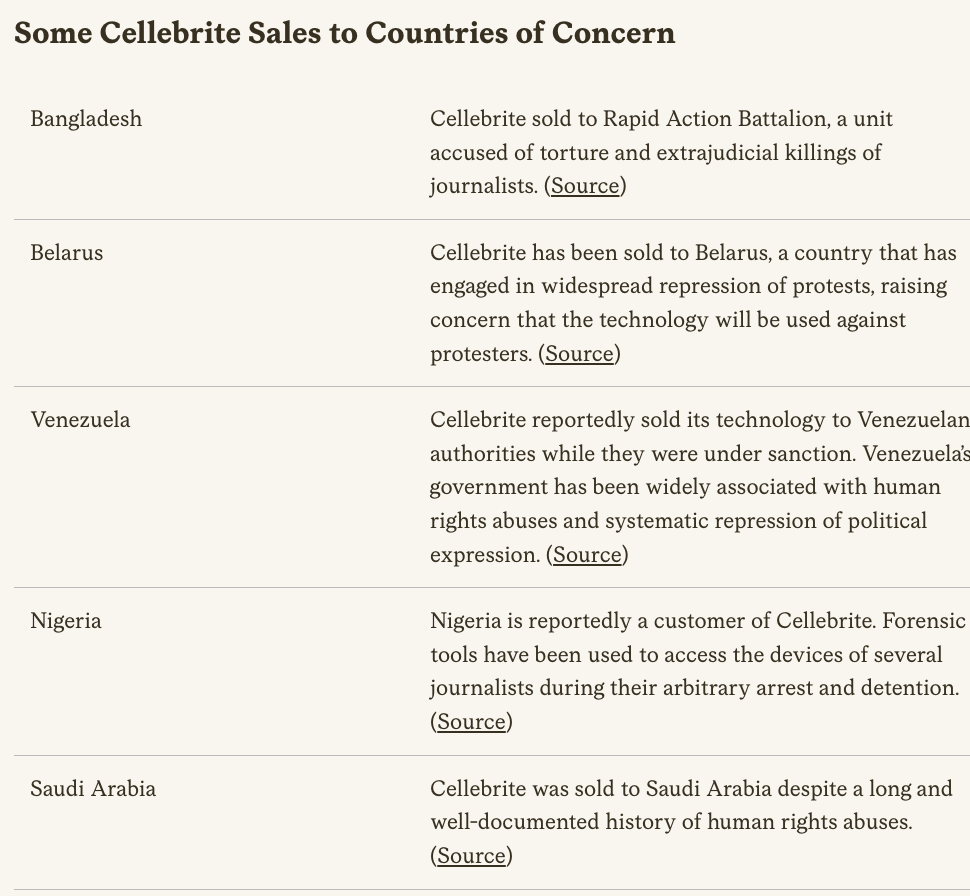

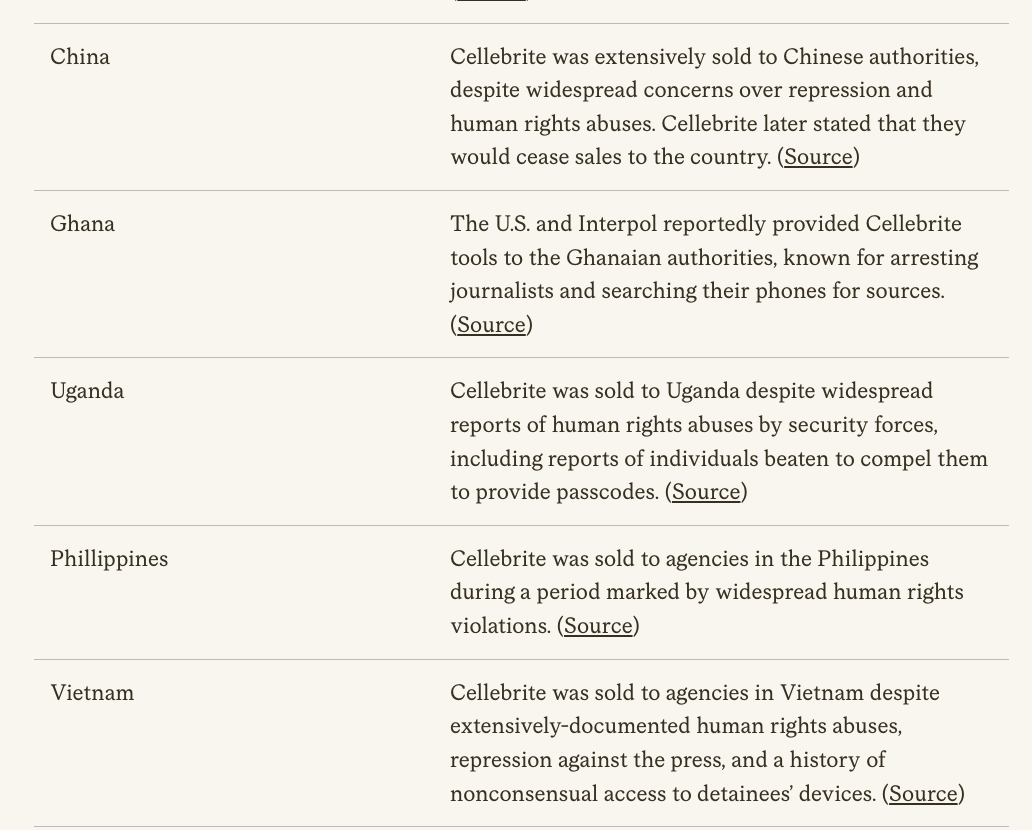

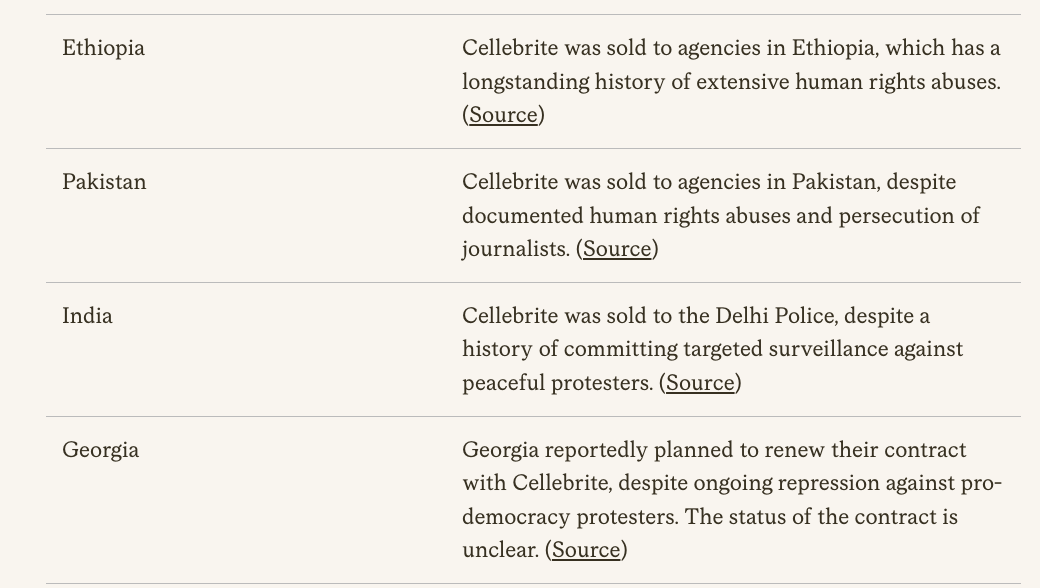

4/ Export control commitments on #Spyware. Again, important.

Worth noting, several signatories have a complex history on surveillance tech export...

So transparency about license granting & denials will be essential for accountability & to ensure commitment has teeth.

5/ Tracking & information sharing. Maybe public shaming? Norms? Again, important.

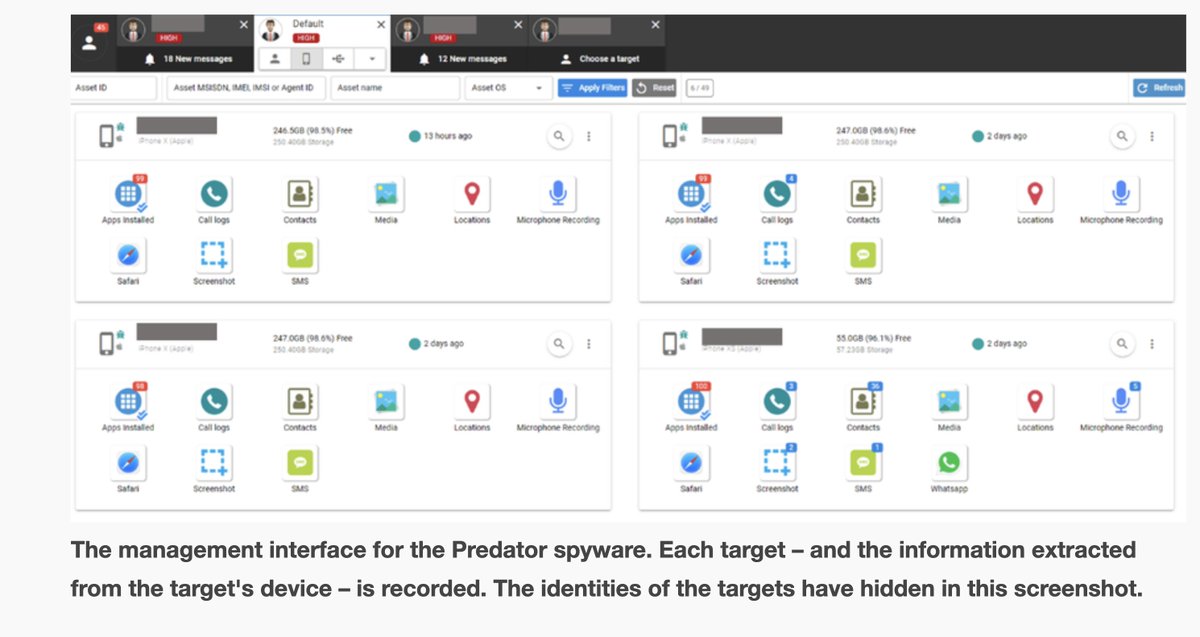

The mercenary #spyware industry has hidden from researchers & victims.

Let's hope it's harder for them to hide from governments.

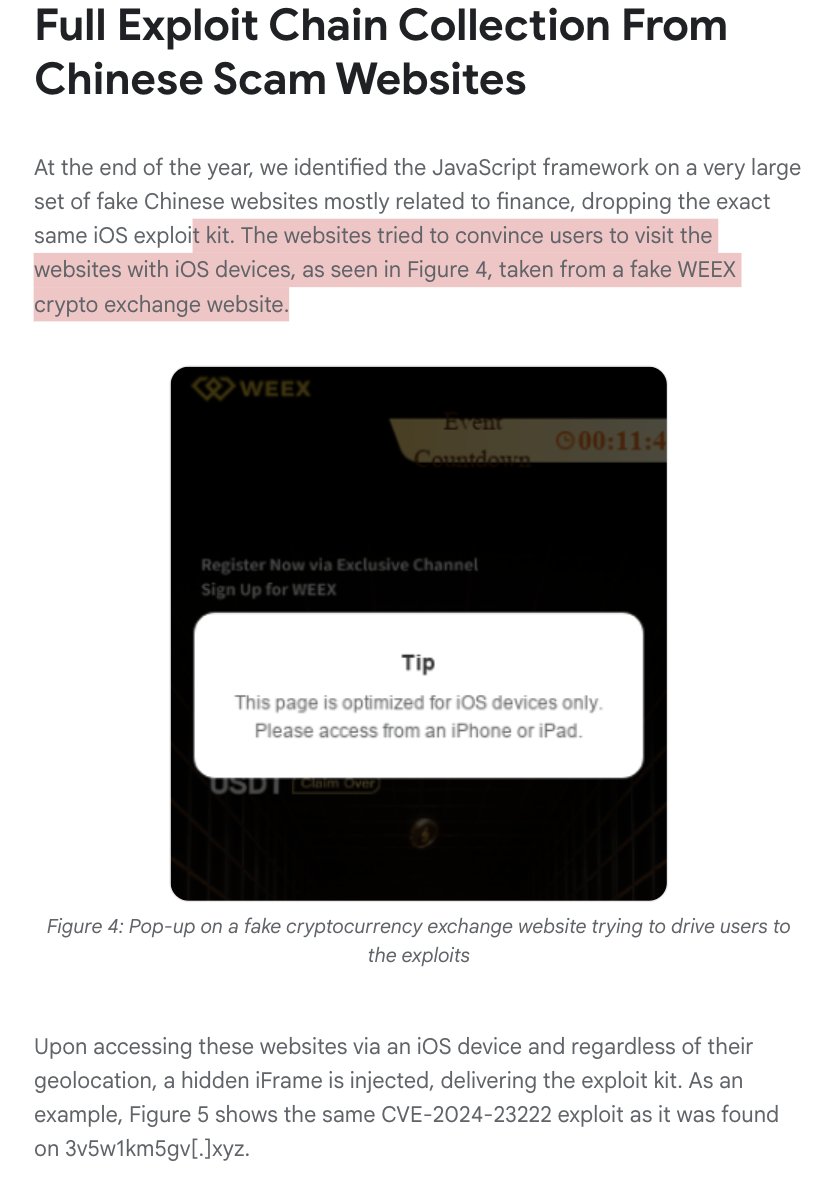

6/ Commercial #spyware proliferation is now a global problem, whether it's sold to autocrats or to more 'democratic' governments in the EU that wind up abusing it.

But a key driver? Investment firms in the US & elsewhere. Good to see the joint statement speak to this.

7/ Lots of movement on #spyware this week

- The Executive Order

- Statements by @POTUS & Deputy AG Lisa Monaco

- this Joint Statement

- & more, just look at this fact sheet

Positive developments that would have been unthinkable a few years ago, but...

whitehouse.gov/briefing-room/…

8/ Spyware proliferation went too far & did too much harm.

Result? Governments are waking up & have started taking action.

But this is also a reminder of all the progress still needed on many fronts, like domestic accountability, oversight & transparency from every signatory.

9/ It remains puzzling to me as I read the joint statement on #Spyware that some EU countries are notably missing (where is #Germany?).

It also puts into stark relief that the EU Parliament's efforts on Spyware have a long way to go.

I hope there is some pressure to catch up!
