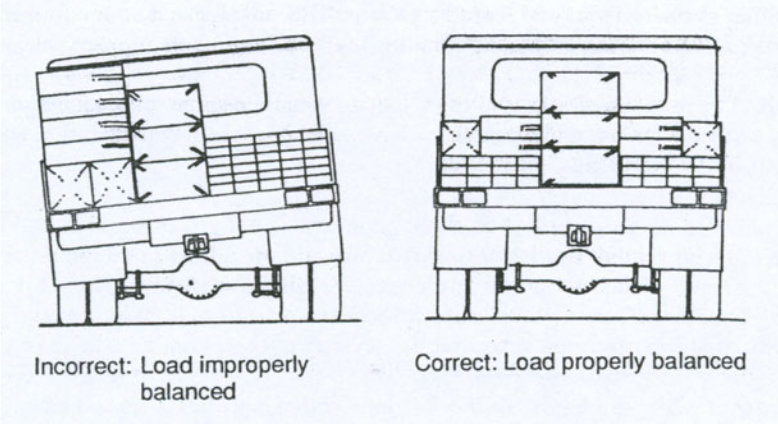

As gatekeepers, our goal is to reduce that number in the future: we let through only trucks that satisfy certain security measures and send the rest back to be reloaded.

4/

For each truck we observe [L,R,Y].

7/

B and Y are independent in our toy example setup.

How can we as gatekeepers reduce the chance of rollover events? If we encounter a truck at the gate with B = 42, should we send it back to have 2 boxes removed? Or 14 added?

11/

It may seem counter-intuitive given that B = L + R and that [L,R] indeed causes Y.

Yet intervening on B, by only letting trucks with certain values of B pass, does not affect Y!

12/
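A quick simulation can make this concrete. This is only a sketch under assumptions that are not part of the thread: loads are treated as continuous, L and R are drawn i.i.d. from a Gaussian, and a truck rolls over when the imbalance |L − R| exceeds a made-up threshold. All numbers and function names are hypothetical.

```python
import random

def simulate(n=200_000, seed=0, threshold=6.0):
    """Draw n hypothetical trucks as (L, R, B, Y) tuples.

    Assumptions (not from the thread): L and R are i.i.d. Gaussian,
    and Y is True when the left/right imbalance exceeds `threshold`.
    """
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        left = rng.gauss(20.0, 4.0)
        right = rng.gauss(20.0, 4.0)
        total = left + right                      # B = L + R
        rolled = abs(left - right) > threshold    # Y depends on [L, R] only
        rows.append((left, right, total, rolled))
    return rows

def rollover_rate(rows, keep):
    """Fraction of trucks that roll over among those the gate lets pass."""
    kept = [r for r in rows if keep(r)]
    return sum(r[3] for r in kept) / len(kept)

rows = simulate()

# No gate at all.
base = rollover_rate(rows, lambda r: True)
# Gate on the macro-variable B: only light trucks may pass.
gate_on_b = rollover_rate(rows, lambda r: r[2] <= 40.0)
# Gate on the micro-variables: only well-balanced trucks may pass.
gate_on_balance = rollover_rate(rows, lambda r: abs(r[0] - r[1]) <= 3.0)

print(f"no gate:         {base:.3f}")
print(f"gate on B:       {gate_on_b:.3f}")
print(f"gate on balance: {gate_on_balance:.3f}")
```

In this setup, the gate on B leaves the rollover rate essentially unchanged, while the gate on the left/right balance removes rollovers entirely, because it acts on the variables that actually drive Y.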

[L,R] & Y are dependent; B & Y aren't.

⇒ Finding a projection/transformation/macro-variable to be independent of Y does not imply that its micro constituents are independent of Y.

[G,H,…] can be dependent on/causing Y,

while f([G,H,…]) is not!

13/
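One way to see how this can happen, as a sketch under assumptions the thread does not state: suppose L and R are i.i.d. Gaussian and Y is a function of the imbalance L − R only (plus noise independent of the load). Then

$$
\begin{aligned}
\operatorname{Cov}(B,\, L-R) &= \operatorname{Cov}(L+R,\, L-R) \\
&= \operatorname{Var}(L) - \operatorname{Cov}(L,R) + \operatorname{Cov}(R,L) - \operatorname{Var}(R) \\
&= \operatorname{Var}(L) - \operatorname{Var}(R) = 0 .
\end{aligned}
$$

Since (B, L − R) is jointly Gaussian, zero covariance implies independence, so B is independent of Y even though Y is fully driven by [L,R].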

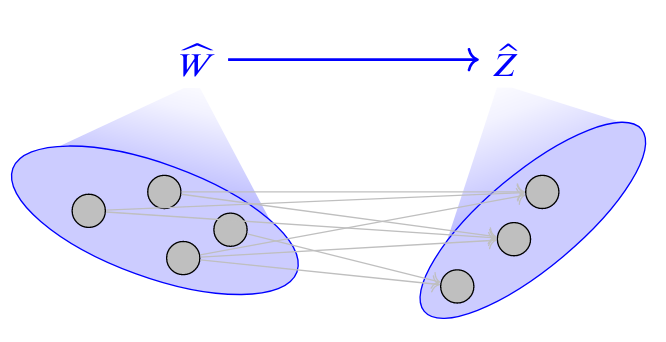

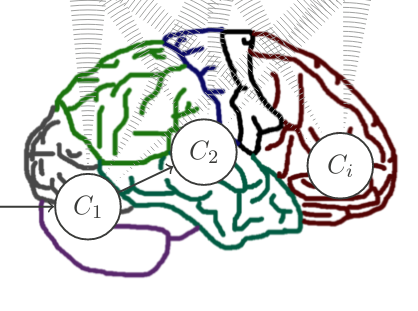

In imaging we measure low-d projections (or transformations in the terminology of auai.org/uai2017/procee…) of a high-d underlying neural level.

Surely, information is lost and not all signal captured!

14/

A: "average neural firing rate in region X" ("number of boxes")

is independent of

B: "arm movement" ("truck rolled over").

Can we conclude that

1) A doesn't cause B? /y

2) "firings of neurons in region X" ("number of boxes left/right") don't cause B? /n

Thoughts?