🧵 Global catastrophic and existential risks: the weightiest complex phenomena?

"Our job is to think about how humanity can have a happy ending...about how GOOD it can be."

- @anderssandberg of @FHIOxford speaking at SFI today:

#AIEthics #Biosecurity

"The idea that humanity actually *could* go extinct required a lot of ideas to come together. First you needed to know that species go extinct. This wasn't clear until [the discovery of fossil Mastodons]."

- @anderssandberg of @FHIOxford at SFI today:

"It's not just that the end of the world could be caused by a bad political decision. In principle, the *right* political decision or technology could *avert* these risks. In the 19th C, it was considered merely a matter of natural causes."

- @anderssandberg (@FHIOxford) at SFI

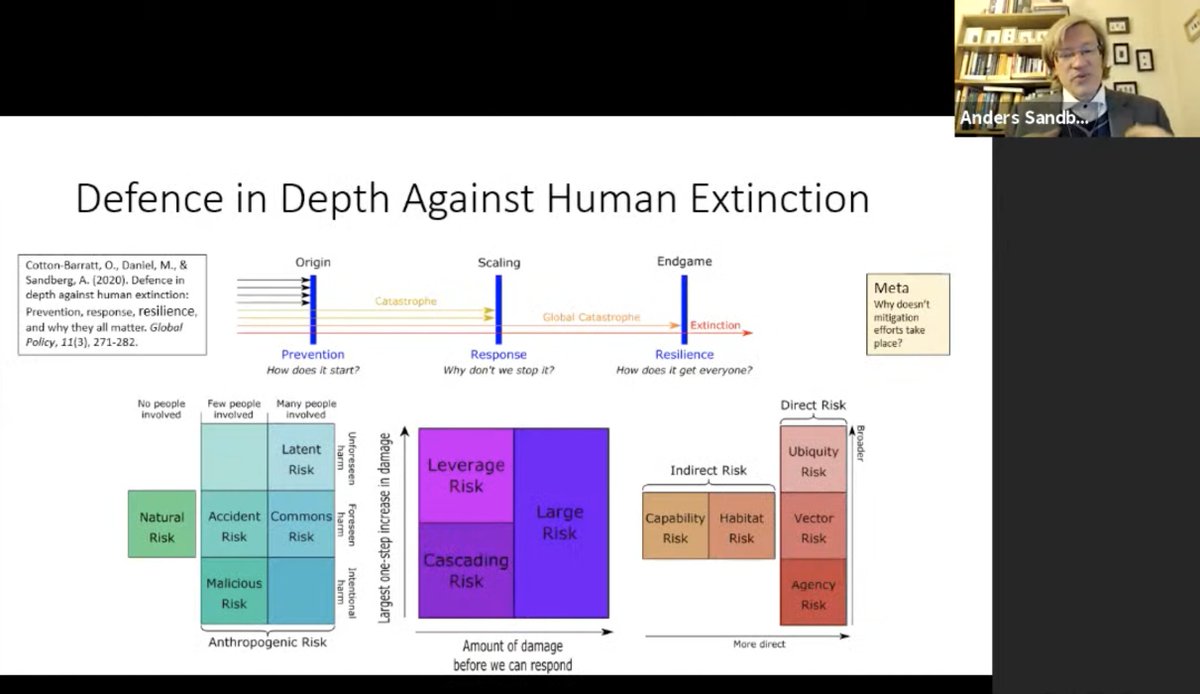

"There are also trans-generational risks, and there may be pan-generational risks."

- @anderssandberg (@FHIOxford) speaking at SFI on a taxonomy of #ExistentialRisk — and how to avert it:

"[Humans] care about continuity. Many of the things we do don't make sense unless we care about our children and our children's children, etc. And it's important to keep our options open...it's very hard to go un-extinct."

- @anderssandberg (@FHIOxford):

"The asteroid: in many ways, it's the nicest form of #ExistentialRisk because it's well understood. We have data that tells us how likely they are, we have the big dinosaur killers mapped out. This is the most well-managed existential risk we have."

- @anderssandberg (@FHIOxford)

"Most people will say it's unlikely that a #pandemic could wipe out an entire species because as people die out, the pathogen can't spread. Unfortunately, amphibians prove otherwise, because immune populations can act as a reservoir."

- @anderssandberg (@FHIOxford) at SFI now

"#ClimateChange will make things hard, but it won't be the end of the world. What worries me is the systemic #risk: a world struggling with the effects of climate change will be less-prepared to deal with other things that come up."

- @anderssandberg (@FHIOxford) at SFI now

"During the Middle Ages, you couldn't have a war that could kill everybody. It's only become possible over the last century...a 'beautiful' demonstration of how an otherwise-intelligent species can place itself in trouble."

- @anderssandberg (@FHIOxford):

"Unlike the @LosAlamosNatLab physicists, the @CERN LHC physicists responded way too late [to justify the safety of their experiments]. ... Ideally, with these low-probability risks, you want several overlapping arguments."

- @anderssandberg (@FHIOxford):

"It doesn't necessarily have to be a scintillating conversation partner to be risky. If you have systems that can autonomously change the world, that's a risk for us."

- @anderssandberg (@FHIOxford) with a nod to @_nickbostrom's comments on #AI/#AGI:

"It's not necessarily that we can't do something to [avert a given risk]. It's that we have incentives *not* to do something."

- @anderssandberg (@FHIOxford)

How to avert a catastrophic cascading failure in food production: @anderssandberg (@FHIOxford) works on the board of @ALLFEDALLIANCE to devise transitional strategies to bridge major disruptions.

"Most of these global risks are complex systems problems."

Betweenness centrality, heavy-tailed distributions, emergent effects, correlations between infrastructural layers, and other nonlinearities make catastrophic risk a perfect area of study for #ComplexSystems science.

@anderssandberg (@FHIOxford) at SFI now:
