What's Data Availability Sampling (DAS), and how does it factor into a modular blockchain? Let's break it down. #ETH #Ethereum #web3 #blockchain #development

🧵 👇

Data availability is an important area of active research. To scale a blockchain, its data needs to be both stored efficiently and retrievable by nodes, many of which may not be able to store the entire blockchain state.

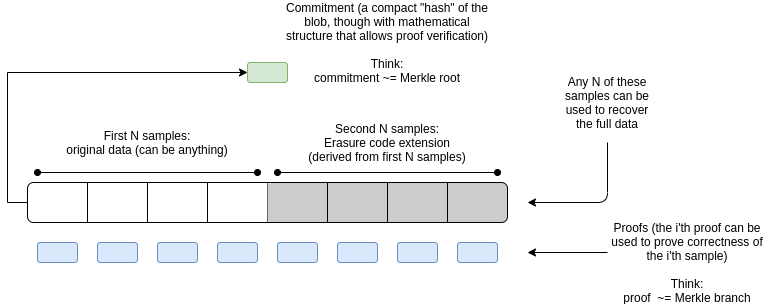

Data is stored in blobs, which are made up of the original data, extended data, and proofs.

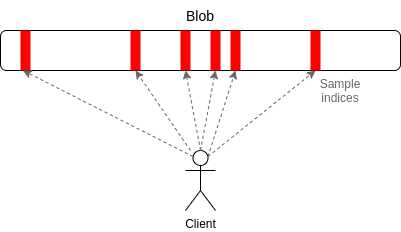

A client samples small pieces of the blob at random instead of downloading the whole thing. If every sample comes back, the client can be confident, with high probability, that more than half of the blob is available, which is enough to recover the entire blob. This is a low-bandwidth way to check the DA of any given blob.
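To get a feel for why a handful of samples is enough, here is a rough back-of-the-envelope sketch. It assumes each sample is drawn independently and uniformly at random, and that a dishonest publisher has released at most half of the blob. The function names are made up for illustration and don't come from any client implementation.

```python
import math

def max_fool_probability(num_samples: int, available_fraction: float = 0.5) -> float:
    """Upper bound on the chance that every random sample succeeds
    even though only `available_fraction` of the blob was published.
    Assumes independent, uniform samples (an illustrative simplification)."""
    return available_fraction ** num_samples

def samples_needed(target: float, available_fraction: float = 0.5) -> int:
    """Smallest number of samples that pushes the bound below `target`."""
    return math.ceil(math.log(target) / math.log(available_fraction))

if __name__ == "__main__":
    for k in (10, 20, 30):
        print(k, max_fool_probability(k))
    print(samples_needed(1e-9))
```

Each extra sample halves the chance of being fooled, so the cost to the client grows slowly while the confidence grows fast.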

How do we know a bad actor isn't just publishing 51% (or more) of the data and withholding the rest? The answer is erasure coding. With erasure coding, the original data is extended with redundant data, so the whole blob can be reconstructed as long as 51% of it is available.

In that case, a client can reconstruct the full blob and rebroadcast it to the network.
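Here's a toy sketch of that idea. It treats the original data as points on a polynomial, extends the blob to twice its size, and then rebuilds everything from any half of the pieces. The tiny prime field and the helper names (`extend`, `reconstruct`) are assumptions for illustration only; production designs use much larger fields and attach proofs to each piece.

```python
P = 65537  # small prime field, chosen only for illustration

def lagrange_eval(points, x):
    """Evaluate at x the unique polynomial through `points`, working mod P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # pow(den, -1, P) is the modular inverse (Python 3.8+)
        total = (total + yi * num * pow(den, -1, P)) % P
    return total

def extend(data):
    """Return the original k values followed by k extended values."""
    k = len(data)
    points = list(enumerate(data))
    return list(data) + [lagrange_eval(points, x) for x in range(k, 2 * k)]

def reconstruct(known, k):
    """Rebuild all 2k values from any k known (index -> value) pairs."""
    points = list(known.items())[:k]
    return [lagrange_eval(points, x) for x in range(2 * k)]

if __name__ == "__main__":
    data = [3, 1, 4, 1]                       # original data (values below P)
    blob = extend(data)                       # original + extended data
    half = {i: blob[i] for i in (1, 4, 6, 7)} # any 4 of the 8 pieces
    print(reconstruct(half, len(data)) == blob)
```

Because any half of the pieces pins down the same polynomial, withholding the other half doesn't stop anyone from rebuilding the blob.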

Using DAS allows for increased scalability and throughput. This is just one piece of the puzzle in a modular blockchain stack, but DAS eliminates a major bottleneck existing today.

Follow so you won't miss more explanatory threads!

(graphics by @VitalikButerin)
