Short answer: yes, certainly, but because the people doing it are only human, not (generally) because they are doing a bad job.

Longer answer below.

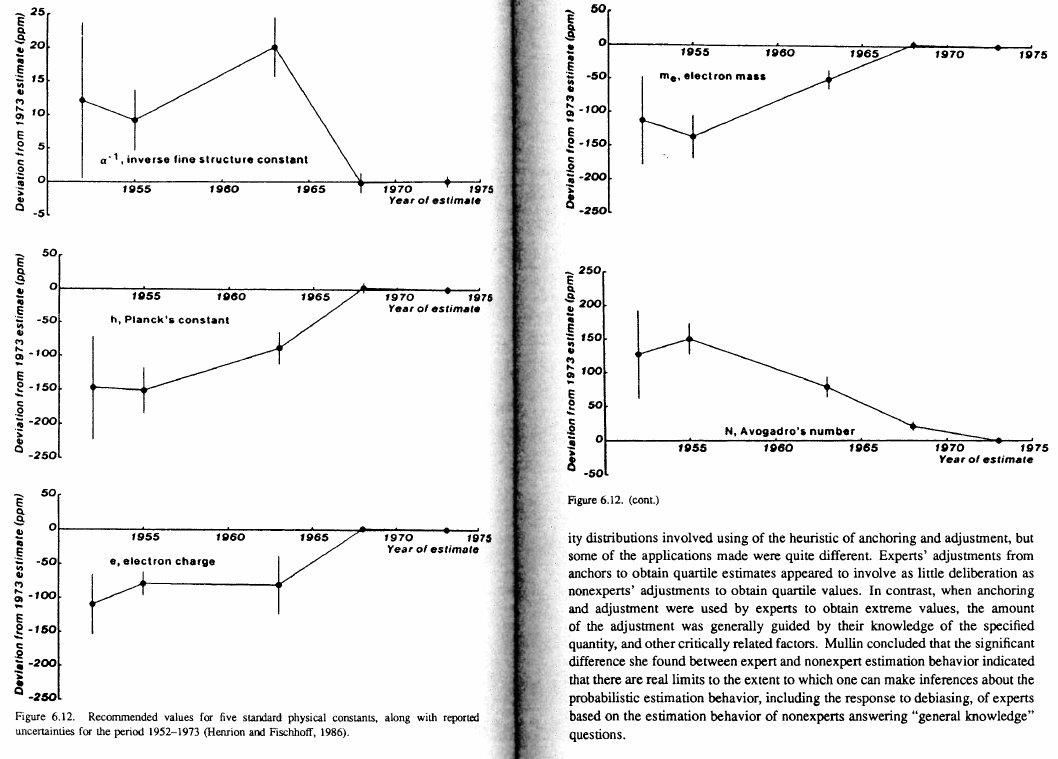

And studies show that this overconfidence affects people whether they are good or bad at math, and on topics both familiar and unfamiliar.

Is that groupthink? No. It just means that experts can agree on the most likely answer, even if that answer is not likely to be correct.
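
To make that last point concrete, here is a minimal sketch with made-up numbers. The five outcomes and their probabilities are purely hypothetical, chosen only to illustrate the arithmetic. If every expert sensibly picks the single most likely outcome, they all agree, yet the answer they agree on is still more likely wrong than right.

```python
# Hypothetical probabilities for five mutually exclusive outcomes.
# These numbers are illustrative assumptions, not data from any study.
outcome_probs = {"A": 0.30, "B": 0.20, "C": 0.20, "D": 0.15, "E": 0.15}

# Each well-informed expert independently picks the most likely outcome,
# so they all land on the same answer without any groupthink.
consensus = max(outcome_probs, key=outcome_probs.get)
p_correct = outcome_probs[consensus]

print(f"Consensus pick: {consensus}")
print(f"Chance the consensus is right: {p_correct:.0%}")
print(f"Chance the consensus is wrong: {1 - p_correct:.0%}")
```

With these assumed numbers the experts unanimously pick A, which has only a 30% chance of being correct.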