It was a long journey, but our paper is finally published in @JPAM_DC. I’m really happy to see it on the journal’s website.

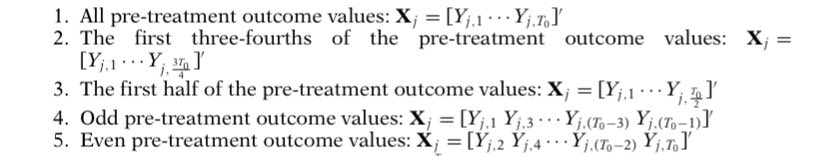

2) If you need to include covariates, use only the specs covered by our theoretical results. They are much more robust and stable.

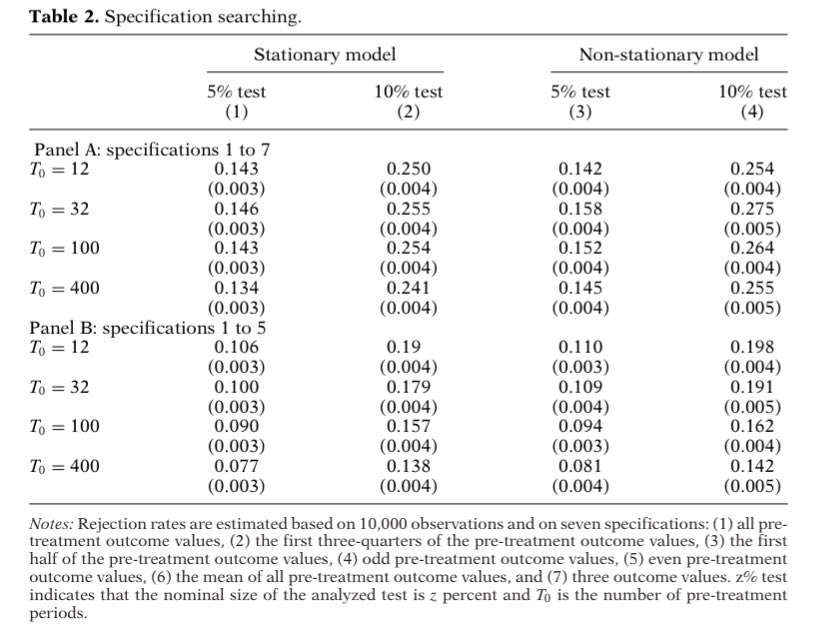

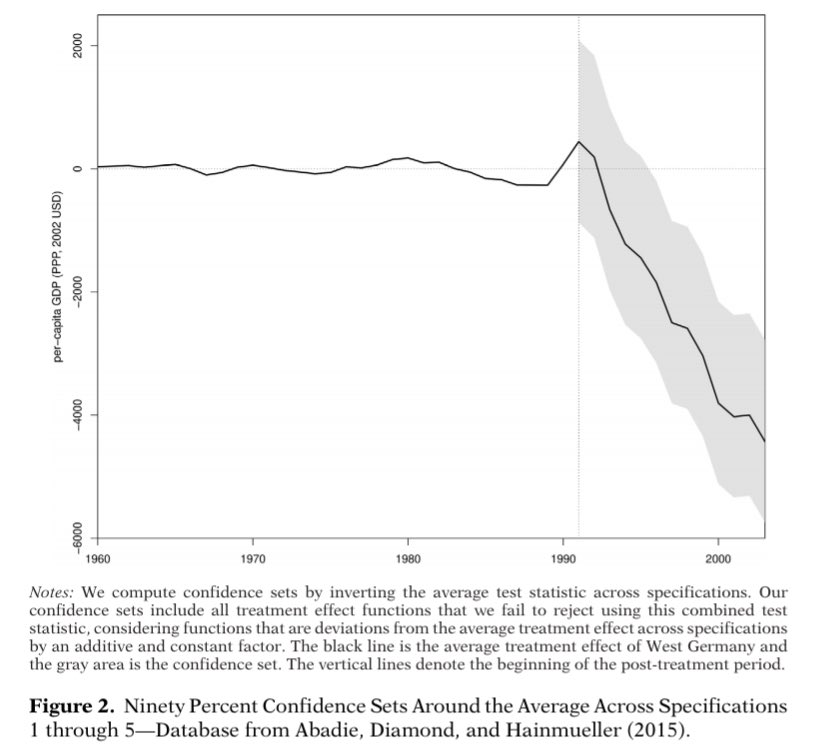

4) Inference should account for the fact that you ran more than one spec. Combine all of them into a single test statistic to control size.
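A minimal sketch of one common way to combine several specs into a single test (not necessarily the test used in the paper): take the largest absolute t-statistic across specs and compare it with a critical value drawn from the joint null distribution of all the t-statistics. It assumes every spec estimates the same effect and that a joint covariance matrix of the K estimates is available (e.g., from a stacked regression or a bootstrap). The function name `max_t_test` and the example numbers are hypothetical.

```python
import numpy as np


def max_t_test(estimates, cov, alpha=0.05, n_draws=100_000, seed=0):
    """Max-|t| test of H0: the effect is zero in every spec.

    estimates: length-K array, one point estimate per spec (hypothetical inputs).
    cov: K x K joint covariance matrix of those estimates (assumed available).
    """
    est = np.asarray(estimates, dtype=float)
    cov = np.asarray(cov, dtype=float)
    se = np.sqrt(np.diag(cov))

    # One combined statistic: the largest |t| across all specs.
    stat = np.max(np.abs(est / se))

    # Under H0 the t-statistics are approximately N(0, corr), so simulate their max.
    corr = cov / np.outer(se, se)
    rng = np.random.default_rng(seed)
    draws = rng.multivariate_normal(np.zeros(len(est)), corr, size=n_draws)
    null_max = np.max(np.abs(draws), axis=1)

    crit = np.quantile(null_max, 1 - alpha)  # size-alpha critical value for the max
    p_value = np.mean(null_max >= stat)
    return stat, crit, p_value, stat > crit


# Hypothetical example: three highly correlated specs, each with SE = 0.05.
corr = np.array([[1.0, 0.9, 0.9],
                 [0.9, 1.0, 0.9],
                 [0.9, 0.9, 1.0]])
cov = 0.05**2 * corr
stat, crit, p_value, reject = max_t_test([0.10, 0.12, 0.08], cov)
print(f"max |t| = {stat:.2f}, critical value = {crit:.2f}, p = {p_value:.3f}")
```

Because the specs are not perfectly correlated, the simulated critical value sits above 1.96. Checking each spec against 1.96 separately and reporting whichever one clears it would therefore reject more often than the nominal 5%.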